Corporate Analysis and Business Intelligence Lack Data Quality

What do companies lack in BI?

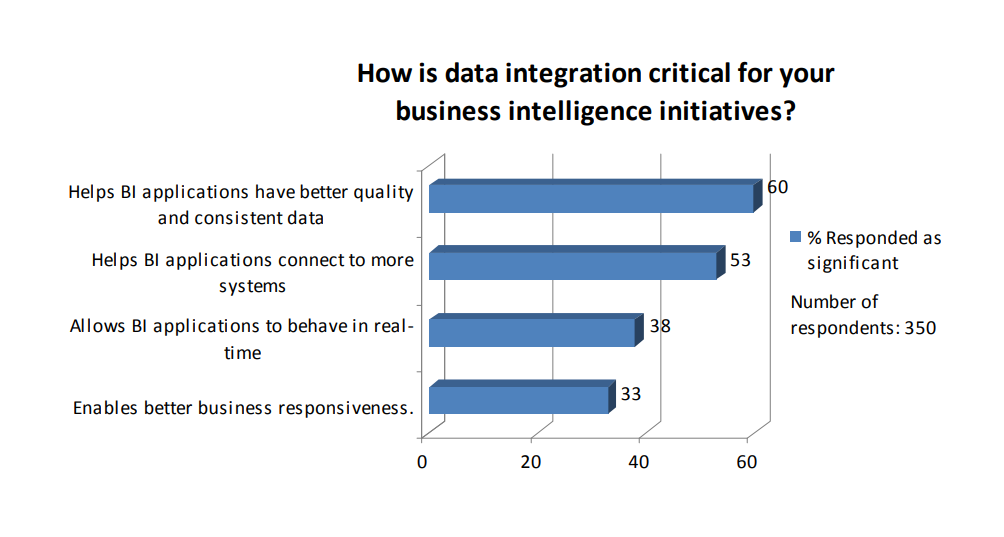

As much as companies talk about committing to business intelligence (BI) principles in their daily work, the concept of BI still seems tricky. One thing requires the utmost attention in every case: data quality.

According to a Gartner report, more than 25% of critical data in Fortune 1000 companies is inaccurate, incomplete, or duplicated, and three-quarters of large enterprises will make little to no progress toward improving data quality until 2010.

Whether your data is already ‘dirty’ and needs regular review, or your company has no systematic process for checking it, sooner or later you realize that something about your data needs fixing. Some companies prefer to run regular automated check-ups; others filter information before it even enters their internal databases. Either way, enough solutions already exist to help you take that first step into the world of BI and get it right.
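To make the idea of a regular automated check-up concrete, here is a minimal Python sketch of a periodic audit that measures two of the problems the Gartner figure names: incomplete and duplicated records. The record layout, `REQUIRED_FIELDS`, and the `audit` function are hypothetical illustrations, not part of any product mentioned in this post; a real check-up would read from your own database on a schedule.

```python
from collections import Counter

# Hypothetical schema: the required fields are illustrative assumptions.
REQUIRED_FIELDS = ("customer_id", "name", "email")


def is_incomplete(record: dict) -> bool:
    """A record is incomplete if any required field is missing or blank."""
    return any(not str(record.get(f) or "").strip() for f in REQUIRED_FIELDS)


def audit(records: list) -> dict:
    """Report the share of incomplete and duplicated records (by customer_id)."""
    total = len(records)
    if total == 0:
        return {"total": 0, "incomplete_pct": 0.0, "duplicate_pct": 0.0}
    incomplete = sum(1 for r in records if is_incomplete(r))
    # Count IDs, ignoring records that have no ID at all.
    id_counts = Counter(r["customer_id"] for r in records if r.get("customer_id"))
    # Every occurrence of an ID beyond its first counts as a duplicate.
    duplicates = sum(n - 1 for n in id_counts.values())
    return {
        "total": total,
        "incomplete_pct": round(100 * incomplete / total, 1),
        "duplicate_pct": round(100 * duplicates / total, 1),
    }


sample = [
    {"customer_id": "C1", "name": "Ann", "email": "ann@example.com"},
    {"customer_id": "C1", "name": "Ann", "email": "ann@example.com"},
    {"customer_id": "C2", "name": "", "email": "bob@example.com"},
    {"customer_id": "C3", "name": "Cy", "email": "cy@example.com"},
]
print(audit(sample))
# {'total': 4, 'incomplete_pct': 25.0, 'duplicate_pct': 25.0}
```

Run on a schedule, a report like this shows whether data quality is actually improving over time rather than leaving it to guesswork.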

Karthikeyan Sankaran, one of the BEYE bloggers, recently posted his list of the top 10 things BI lacks. Besides data quality, he singles out such foundational aspects of BI as handling structured and unstructured data, valuation techniques, predictive analytics / data mining, technology limitations, simulations, and on-demand analytics, among others.

The list will vary slightly from one company to another, but drawing one up and using it to refine your business intelligence strategy is certainly helpful. No one can tell you how rewarding that is; you can only feel it for yourself as you gradually mark each item on the list “taken care of” or “implemented.”

Fixing data before delivering it to decision-makers

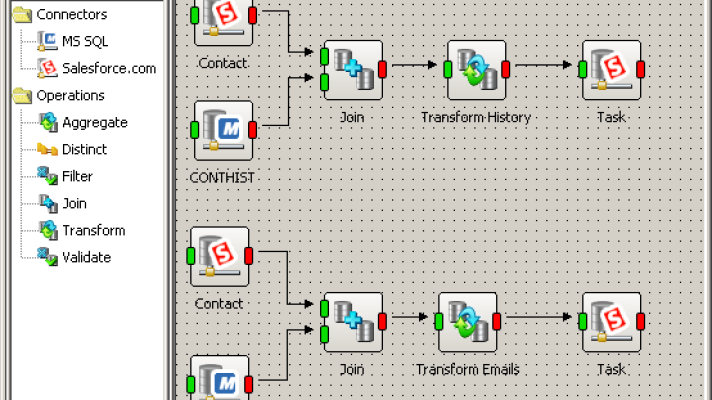

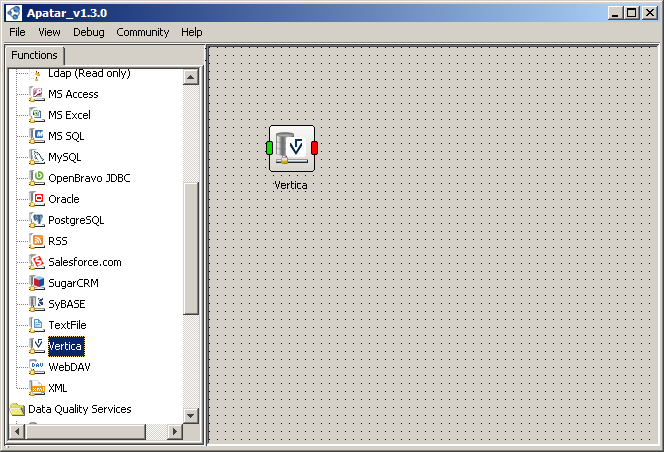

That’s why last month Apatar announced connectivity to the Vertica Analytic Database, a high-performance, grid-based, column-oriented database management system used for analytics and business intelligence. The new connector enables both developers and business users to migrate data between the Vertica Analytic Database and third-party apps without any custom coding, and it allows this data to be filtered, validated, and cleansed along the way.

The Apatar connector for Vertica

While huge amounts of corporate data are scattered across enterprises and becoming outdated too rapidly, the connector for Vertica can help companies synchronize their most critical data before delivering it to decision-makers.
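The filter, validate, and cleanse sequence can be sketched as a small pre-load step. This is a hypothetical Python illustration, not Apatar’s API (Apatar does this visually, without custom code): the `keep`, `validate`, `cleanse`, and `run` functions, the field names, and the rule that only active accounts matter are all assumptions made for the example. Rejected rows are kept together with a reason instead of being silently dropped, so they can be fixed at the source.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical pre-load pipeline; NOT Apatar's API. Field names are assumptions.


@dataclass
class PipelineResult:
    clean: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def keep(record: dict) -> bool:
    """Filter: assume only active accounts matter for this analysis."""
    return record.get("status") == "active"


def validate(record: dict) -> Optional[str]:
    """Validate: return a rejection reason, or None if the record passes."""
    if not str(record.get("customer_id") or "").strip():
        return "missing customer_id"
    try:
        float(record.get("revenue"))
    except (TypeError, ValueError):
        return "non-numeric revenue"
    return None


def cleanse(record: dict) -> dict:
    """Cleanse: trim whitespace, normalize case, store revenue as a number."""
    return {
        "customer_id": str(record["customer_id"]).strip().upper(),
        "name": str(record.get("name") or "").strip().title(),
        "revenue": round(float(record["revenue"]), 2),
    }


def run(records: list) -> PipelineResult:
    result = PipelineResult()
    for r in records:
        if not keep(r):
            continue  # filtered out: not relevant, but not an error either
        reason = validate(r)
        if reason:
            result.rejected.append((r, reason))
        else:
            result.clean.append(cleanse(r))
    return result


rows = [
    {"customer_id": " c-101 ", "name": "ada lovelace", "revenue": "1200.5", "status": "active"},
    {"customer_id": "", "name": "no id", "revenue": "10", "status": "active"},
    {"customer_id": "c-102", "name": "bob", "revenue": "n/a", "status": "active"},
    {"customer_id": "c-103", "name": "old co", "revenue": "5", "status": "closed"},
]
result = run(rows)
print(result.clean)
# [{'customer_id': 'C-101', 'name': 'Ada Lovelace', 'revenue': 1200.5}]
print([reason for _, reason in result.rejected])
# ['missing customer_id', 'non-numeric revenue']
```

Only `result.clean` would be handed on to the database load step; `result.rejected` goes back to whoever owns the source system.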

“Businesses are asking more questions against ever-growing volumes of data from multiple sources, so we are excited about our relationship with Apatar,” said Colin Mahony, Senior Director of Business Development for Vertica. “Vertica’s Analytic Database enables companies to store terabytes of information with blazingly fast query response times. With Apatar’s visual designer and integration tools, it becomes extremely easy to extract and load large volumes of data into Vertica quickly for rapid analysis.”

Before data enters the Vertica Analytic Database, it can be filtered, validated, and cleansed using Apatar’s data quality capabilities. Both Vertica users and administrators can now de-duplicate data received from multiple sources, verify e-mail and postal addresses, check customers’ socioeconomic parameters, and more.
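De-duplication across sources and address checks can also be sketched in a few lines of Python. This is an illustration built on assumptions, not how Apatar implements these features: it keys contacts on a normalized e-mail address, fills empty fields from later sources (a hypothetical survivorship rule), and checks only the syntax of e-mail addresses and US ZIP codes. Genuine verification, such as confirming that a mailbox or street address exists, requires an external reference service and is not shown.

```python
import re

# Syntax-only checks (assumptions): they do not prove a mailbox or address exists.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$")
US_ZIP_RE = re.compile(r"^\d{5}(-\d{4})?$")


def merge_sources(*sources):
    """De-duplicate contacts from several sources, keyed on normalized e-mail.

    The first record seen for an address wins; later sources only fill in
    fields that are still empty (a hypothetical survivorship rule).
    """
    merged = {}
    for source in sources:
        for rec in source:
            key = (rec.get("email") or "").strip().lower()
            if not key:
                continue  # no usable key, so it cannot be matched
            if key not in merged:
                merged[key] = {**rec, "email": key}
            else:
                for k, v in rec.items():
                    if v and not merged[key].get(k):
                        merged[key][k] = v
    return list(merged.values())


def flag_issues(rec: dict) -> list:
    """Return a list of problems found in one merged contact."""
    issues = []
    if not EMAIL_RE.match(rec["email"]):
        issues.append("bad email")
    if not US_ZIP_RE.match(rec.get("zip") or ""):
        issues.append("bad zip")
    return issues


crm = [{"email": "Ann@Example.com", "name": "Ann Lee", "zip": ""}]
webshop = [
    {"email": "ann@example.com ", "name": "A. Lee", "zip": "02139"},
    {"email": "bob@invalid", "name": "Bob", "zip": "1234"},
]
contacts = merge_sources(crm, webshop)
for c in contacts:
    print(c["email"], c["zip"], flag_issues(c))
# ann@example.com 02139 []
# bob@invalid 1234 ['bad email', 'bad zip']
```

Keying on e-mail is only one choice; matching on name plus postal address with fuzzy comparison catches more duplicates, at the cost of more false matches.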

The truth is that most data integration projects fail: they exceed their allocated budgets or miss their deadlines, and are never completed. By using data quality and integration tools, enterprises can accelerate decision-making and make sure their most critical information is complete and available in time, before it’s too late.

Check out the Vertica connector tutorial for more.
