KubeEdge: Monitoring Edge Devices at the World’s Longest Sea Bridge

Addressing limited resources

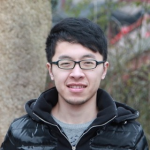

In 2021, Kubernetes adoption increased by 67%. According to a Cloud Native Computing Foundation (CNCF) report, edge computing was one of the main drivers behind Kubernetes usage. While adoption among back-end developers grew by 4%, it rose by 11% among edge developers.

The CNCF forecasts annual growth of 19% in the Internet of Things (IoT) sector, with more edge computing workloads moving to Kubernetes and further accelerating its adoption. In addition, a growing number of engineers favor serverless technologies for their lightweight nature (48% at the edge vs. 33% at the back end).

Edge computing was developed in response to the rapid growth of the IoT, where organizations have hundreds or even thousands of devices, each continuously transmitting data to and from the cloud. Having so many devices transmitting data simultaneously results not only in higher latency due to network congestion, but also in higher bandwidth and operational costs.

10% more edge developers utilized Kubernetes in 2021 (Image credit)

Compared to typical data centers, resources are much more limited at the edge. Therefore, many organizations running apps at the edge rely on containers, which are lightweight and have a small footprint. Managed with Kubernetes, this model helps to make the most of the available resources and work around these limitations. In a recent blog post, we covered how Osaka University cut power consumption by 13% using Kubernetes and artificial intelligence (AI).

During KubeCon North America 2021, Yin Ding (ex–Pure Storage) outlined additional challenges when running Kubernetes at the edge:

- The network at the edge has limited bandwidth and can be unstable.

- Edge nodes should be autonomous to prevent interruptions due to the lack of connectivity.

“Due to an unstable network, the edge can be disconnected from the cloud. So, the edge needs to run autonomously. Also, the control plane should not evict or migrate applications when a disconnect occurs.” —Yin Ding

These are just a few of the challenges that KubeEdge, a CNCF incubating project, aims to resolve. For instance, the tool is used to monitor edge devices on the world’s longest sea bridge, which connects Hong Kong, Zhuhai, and Macao.

What is KubeEdge?

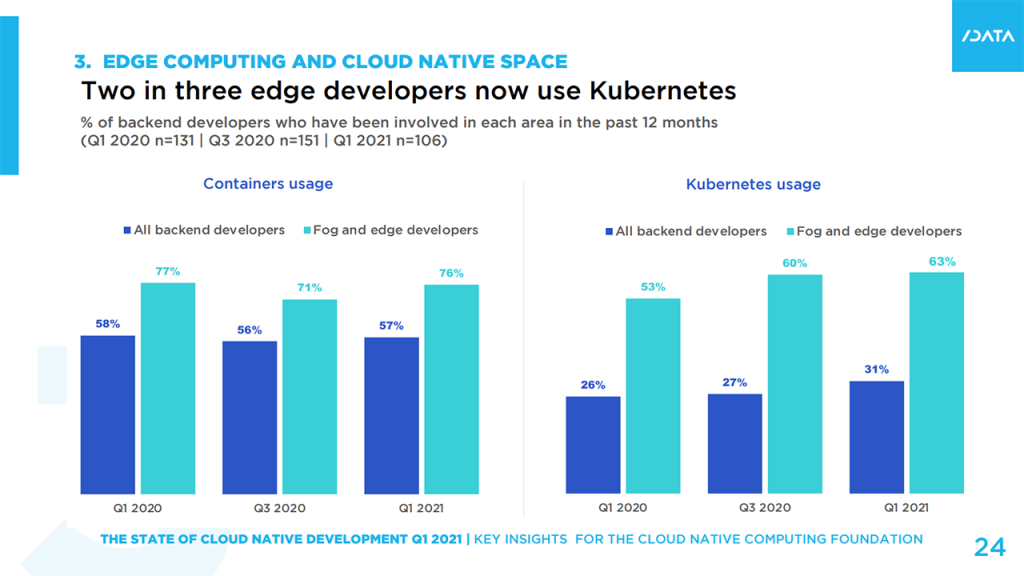

Started by Huawei in 2018 and later donated to the Cloud Native Computing Foundation in 2019, KubeEdge is a system for extending native containerized application orchestration capabilities to hosts at the edge. Built on Kubernetes, the project provides core infrastructure support for networking, application deployment, and metadata synchronization between cloud and edge.

KubeEdge enables:

- Kubernetes-native support. Developers can use the Kubernetes API to deploy apps, manage devices, and monitor app and device status on edge nodes, much as they would for clusters in the cloud.

- Edge device management. Edge devices are managed through Kubernetes-native APIs implemented by custom resource definitions (CRDs).

- Edge autonomy. Edge nodes keep running even when the network between the cloud and the edge is unstable or offline.

- Low resource readiness. EdgeCore, the edge client, is extremely lightweight (approximately 70 MB).

“KubeEdge supports the Kubernetes-native API. Developers can use the same API to deploy to the cloud and to the edge.” —Yin Ding, Google
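To illustrate the point about using the same API, here is a minimal sketch that builds an ordinary `apps/v1` Deployment and pins it to edge nodes with a node selector. The app name, the image, and the `edge_deployment` helper are hypothetical, and the `node-role.kubernetes.io/edge` label is an assumption about how edge nodes are labeled in a given cluster, so check the labels on your own nodes first.

```python
import json


def edge_deployment(name: str, image: str, replicas: int = 1) -> dict:
    """Build a standard apps/v1 Deployment that targets edge nodes."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # Assumption: edge nodes carry this role label.
                    "nodeSelector": {"node-role.kubernetes.io/edge": ""},
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


# Hypothetical app name and image, used only for illustration.
manifest = edge_deployment("sensor-collector", "example.com/sensor-collector:0.1")
manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

Because the result is a regular Kubernetes manifest, the printed JSON could be saved to a file and applied with `kubectl apply -f`, the same way as for a cloud workload. Only the node selector makes it edge-specific.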

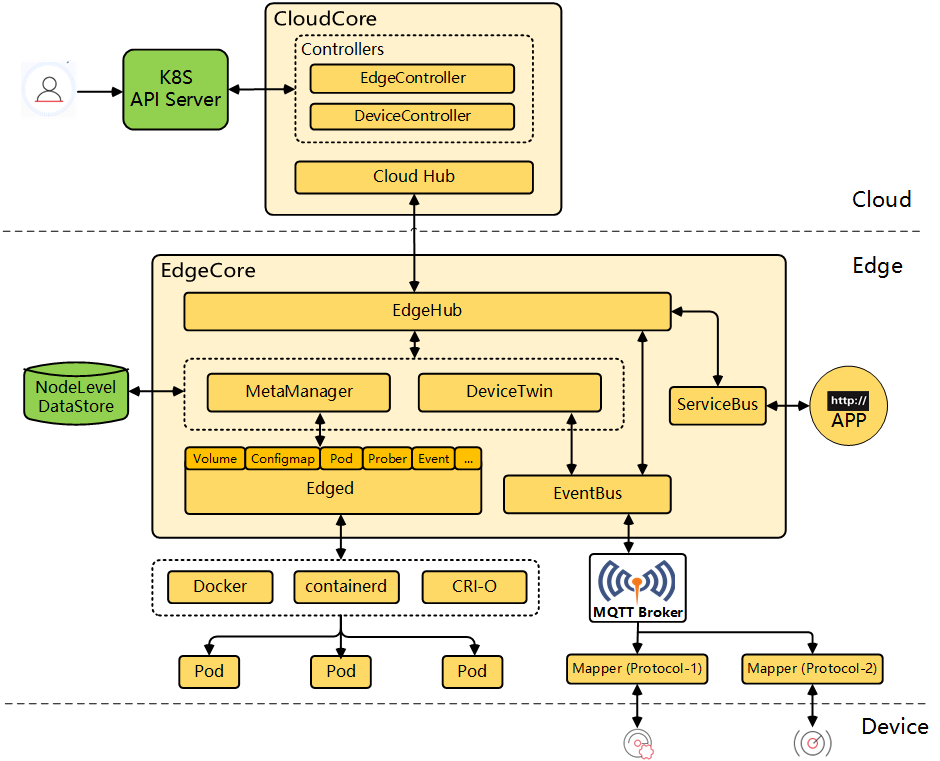

The architecture of KubeEdge spanning the cloud, edge, and devices (Image credit)


How does it work?

KubeEdge consists of two parts: CloudCore and EdgeCore. Each of them is made up of several components. CloudCore comprises:

- CloudHub. A WebSocket server responsible for watching changes at the cloud side, caching, and sending messages to EdgeHub.

- EdgeController. An extended Kubernetes controller for managing the metadata of edge nodes and pods, so that the data can be targeted to a specific edge node.

- DeviceController. An extended Kubernetes controller for managing devices, so that the device metadata can be synced between edge and cloud.

EdgeCore, in turn, is divided into:

- EdgeHub. A WebSocket client responsible for interacting with cloud services, such as CloudHub, on behalf of the edge.

- Edged. An agent that runs on edge nodes and manages containerized apps.

- EventBus. An MQTT client that interacts with MQTT servers (such as Mosquitto), offering publish and subscribe capabilities to other components.

- ServiceBus. A client to interact with HTTP servers, enabling cloud components to reach HTTP servers running at the edge.

- DeviceTwin. A module responsible for storing device status and syncing it to the cloud.

- MetaManager. The message processor between Edged and EdgeHub. It is also responsible for storing and retrieving metadata to and from a lightweight database, such as SQLite.
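The publish and subscribe model that EventBus exposes can be sketched with a small in-memory bus that follows MQTT-style topic matching, where `+` matches exactly one topic level and `#` matches all remaining levels. This is only an illustration of the pattern, not KubeEdge or Mosquitto code. The `LocalBus` class and the topic names are hypothetical, and a real deployment would use an MQTT client library talking to a broker.

```python
from typing import Callable, List, Tuple

Handler = Callable[[str, str], None]


def topic_matches(pattern: str, topic: str) -> bool:
    """MQTT-style match: '+' matches one level, '#' matches the rest."""
    p_parts = pattern.split("/")
    t_parts = topic.split("/")
    for i, part in enumerate(p_parts):
        if part == "#":
            # '#' is only valid as the last level of a pattern.
            return i == len(p_parts) - 1
        if i >= len(t_parts):
            return False
        if part != "+" and part != t_parts[i]:
            return False
    return len(p_parts) == len(t_parts)


class LocalBus:
    """In-memory stand-in for a broker: delivers messages to matching subscribers."""

    def __init__(self) -> None:
        self._subs: List[Tuple[str, Handler]] = []

    def subscribe(self, pattern: str, handler: Handler) -> None:
        self._subs.append((pattern, handler))

    def publish(self, topic: str, payload: str) -> int:
        """Deliver the payload and return the number of handlers called."""
        delivered = 0
        for pattern, handler in self._subs:
            if topic_matches(pattern, topic):
                handler(topic, payload)
                delivered += 1
        return delivered


# Hypothetical topics: one subscriber listens to temperature from any node.
bus = LocalBus()
received = []
bus.subscribe("bridge/+/temperature", lambda t, p: received.append((t, p)))
bus.publish("bridge/node-7/temperature", "21.5")
bus.publish("bridge/node-7/humidity", "60")
```

After the two `publish` calls, only the temperature message reaches the subscriber, because the humidity topic does not match the subscription pattern.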

KubeEdge components (Image credit)

At KubeCon North America 2021, Kevin Wang from Huawei explained how deploying an app in KubeEdge is different from a standard Kubernetes deployment. According to Kevin, in a “vanilla” Kubernetes deployment, after the scheduler finds a feasible node for a pod, the Kubelet on the corresponding node will spin up the containers of the pod.

In KubeEdge, by contrast, CloudCore receives all the notifications from the API server and forwards the pod information to the corresponding nodes located at the edge. Next, MetaManager in EdgeCore stores the pod data in SQLite and then forwards the information to Edged, a lightweight Kubelet.

“From a pod life cycle perspective, KubeEdge is similar to the original Kubernetes behavior. Each component runs as normal, and they do not need to worry about the other steps added by KubeEdge.” —Kevin Wang, Huawei
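The edge-side part of this flow (persist the pod metadata locally, then hand it to the agent) can be sketched as follows. This is a simplified model, not KubeEdge code. `MetaStore` and `EdgeAgent` are hypothetical stand-ins for MetaManager's database and Edged, the table layout is invented for illustration, and the recovery step assumes that the local copy is what lets a node restore its workloads while disconnected from the cloud.

```python
import json
import sqlite3


class MetaStore:
    """Stand-in for MetaManager's local database (in-memory SQLite here)."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS meta (key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )

    def save(self, key: str, obj: dict) -> None:
        self.db.execute(
            "INSERT OR REPLACE INTO meta (key, value) VALUES (?, ?)",
            (key, json.dumps(obj)),
        )
        self.db.commit()

    def load_pods(self) -> list:
        rows = self.db.execute(
            "SELECT value FROM meta WHERE key LIKE 'pod/%' ORDER BY key"
        ).fetchall()
        return [json.loads(value) for (value,) in rows]


class EdgeAgent:
    """Stand-in for Edged: records which pods it was asked to run."""

    def __init__(self) -> None:
        self.running = {}

    def sync_pod(self, pod: dict) -> None:
        self.running[pod["name"]] = pod["image"]


def handle_cloud_message(store: MetaStore, agent: EdgeAgent, pod: dict) -> None:
    """Persist the pod metadata first, then forward it to the agent."""
    store.save("pod/" + pod["name"], pod)
    agent.sync_pod(pod)


def recover_offline(store: MetaStore, agent: EdgeAgent) -> None:
    """Rebuild the agent's state from the local store, without the cloud."""
    for pod in store.load_pods():
        agent.sync_pod(pod)


# Hypothetical pod; a message arrives from the cloud while the link is up.
store = MetaStore()
agent = EdgeAgent()
handle_cloud_message(store, agent, {"name": "collector", "image": "example.com/collector:0.1"})

# Later the agent restarts while the cloud is unreachable.
restarted = EdgeAgent()
recover_offline(store, restarted)
```

Writing to the store before notifying the agent means the node never runs a pod it could not restore after a restart.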

Monitoring the longest sea bridge in the world

The Hong Kong–Zhuhai–Macao bridge is an open-sea fixed link that spans the Lingding and Jiuzhou channels. It is considered to be the longest sea crossing in the world, stretching for 55 km.

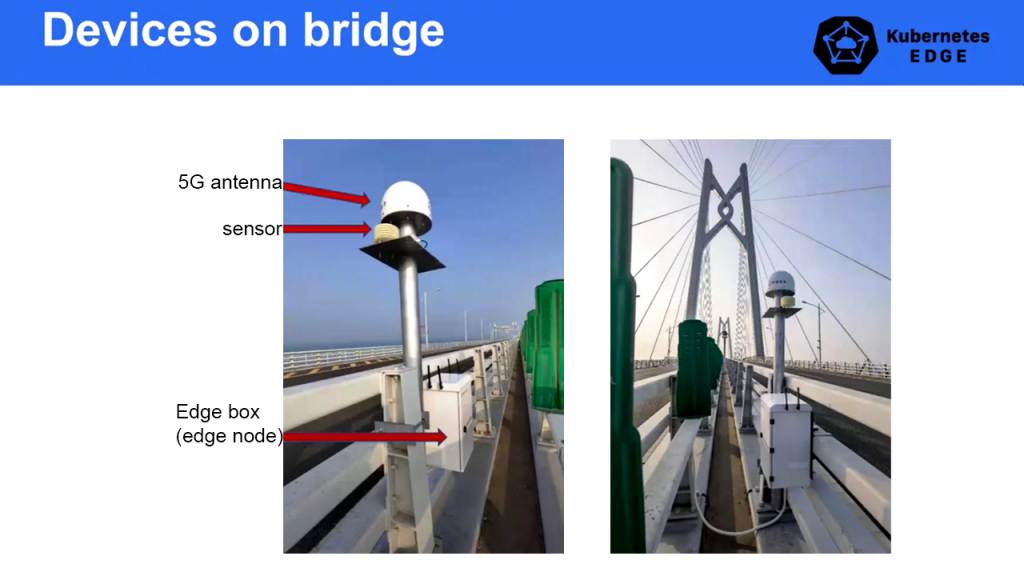

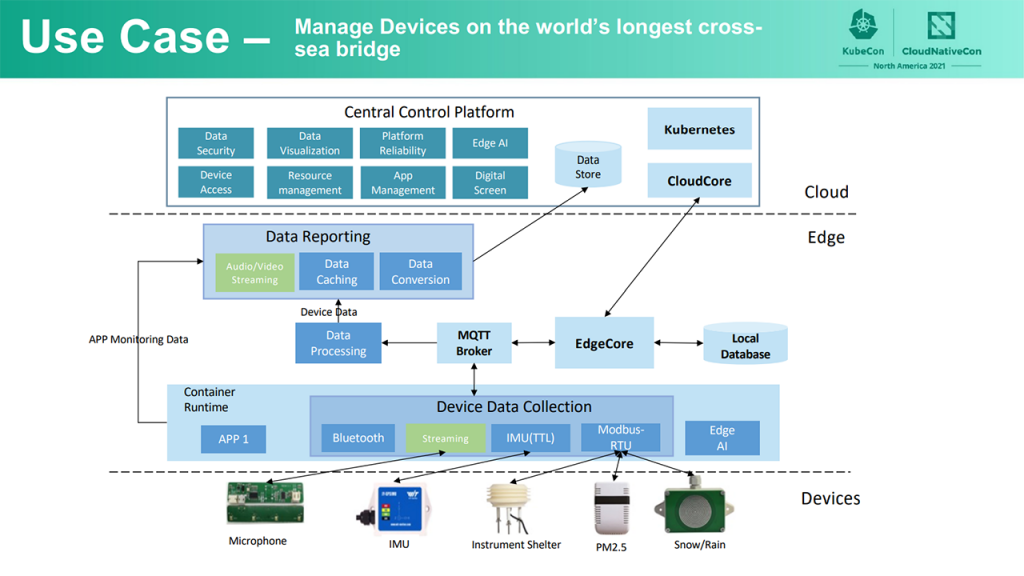

During KubeCon Europe 2021, Huan Wei from Harmony Cloud explained that monitoring the bridge was challenging due to its length and the large number of IoT devices required. To create a monitoring system for the bridge, a combination of technologies, including 5G communication, BeiDou positioning solutions, and KubeEdge, was deployed.

IoT devices on the bridge (Image credit)

According to Huan, each edge node on the bridge collects up to 14 different types of sensor data. Those include light intensity, carbon dioxide, atmospheric pressure, noise, temperature, humidity, PM 2.5 (fine particulate matter), PM 10 (particulate matter), rain and snow, acceleration, angular velocity, Euler angle, magnetic field, and sound. In addition, KubeEdge enables business apps, as well as device mapper and AI inference programs, to be deployed at the edge.

“All the data collected from the sensors is processed locally at the edge. Any abnormal information can be found through real-time artificial intelligence inference running at the edge. All the edge applications are managed and distributed at the cloud, supporting dynamic operation and maintenance at the edge.”

—Huan Wei, Harmony Cloud
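The talk does not describe the models used on the bridge, so the sketch below swaps in a much simpler technique, a rolling z-score check, purely to show what flagging abnormal readings locally at the edge can look like. The `RollingAnomalyDetector` class, the window size, the threshold, and the sample temperature readings are all assumptions made for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags a reading that deviates strongly from the recent window."""

    def __init__(self, window: int = 20, threshold: float = 3.0, warmup: int = 5) -> None:
        self.values = deque(maxlen=window)
        self.threshold = threshold
        self.warmup = warmup

    def observe(self, value: float) -> bool:
        """Return True if the value looks abnormal compared to recent readings."""
        is_anomaly = False
        if len(self.values) >= self.warmup:
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Keep anomalies out of the window so they do not skew the baseline.
        if not is_anomaly:
            self.values.append(value)
        return is_anomaly


# Hypothetical temperature readings with one obvious spike.
detector = RollingAnomalyDetector()
readings = [20.0, 20.1, 19.9, 20.2, 20.0, 20.1, 35.0, 20.0]
flags = [detector.observe(r) for r in readings]
```

Only flagged readings would then need to be reported to the cloud right away, which fits the goal of processing sensor data locally.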

Monitoring the Hong Kong–Zhuhai–Macao bridge (Image credit)

Huan shared the following lessons learned from deploying KubeEdge to monitor the bridge:

- Data collection and reporting frequency should be proportional to the resources available in EdgeCore.

- Since the network at the edge is not always stable, there should be enough storage space allocated at the edge to enable EdgeCore to cache data. Once the network is restored, the cached data can be uploaded to the cloud.

- When KubeEdge is deployed at scale (over 100,000 edge nodes), it is important to have an update strategy, where the cloud continuously scans for new edge nodes and automatically installs apps.
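The second lesson, caching data while the network is down and uploading it once the link is restored, is a store-and-forward pattern. Below is a minimal sketch of it. The `StoreAndForward` class and the `fake_upload` function are hypothetical, and the bounded buffer that drops the oldest readings when full is one possible policy among several, chosen here to reflect the limited storage at the edge.

```python
from collections import deque
from typing import Any, Callable


class StoreAndForward:
    """Buffers readings locally and uploads them when the cloud is reachable."""

    def __init__(self, upload: Callable[[Any], None], capacity: int = 1000) -> None:
        self.upload = upload  # expected to raise ConnectionError when offline
        # Bounded buffer: when full, the oldest reading is dropped.
        self.buffer = deque(maxlen=capacity)

    def submit(self, reading: Any) -> None:
        self.buffer.append(reading)
        self.flush()

    def flush(self) -> int:
        """Upload buffered readings in order; stop at the first failure."""
        sent = 0
        while self.buffer:
            try:
                self.upload(self.buffer[0])
            except ConnectionError:
                break
            self.buffer.popleft()
            sent += 1
        return sent


# Simulated link state and cloud storage, for illustration only.
state = {"online": False}
cloud = []


def fake_upload(reading: Any) -> None:
    if not state["online"]:
        raise ConnectionError("cloud unreachable")
    cloud.append(reading)


cache = StoreAndForward(fake_upload, capacity=3)
for reading in [1, 2, 3, 4]:
    cache.submit(reading)  # offline: readings accumulate, the oldest is dropped

state["online"] = True
cache.flush()  # link restored: buffered readings are uploaded in order
```

The capacity ties back to the first lesson as well: the higher the collection frequency, the faster a fixed-size buffer fills up during an outage.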

KubeEdge v1.9.2 was released on March 22, 2022. Moving forward, some of the long-term plans include simplifying cross-subnet communication and improving both storage and security at the edge. Anyone interested in KubeEdge can track its development in the project’s GitHub repo.

Want details? Watch the videos!

Yin Ding and Kevin Wang provide an overview of KubeEdge.

Huan Wei explains how the world’s longest cross-sea bridge is monitored with the help of KubeEdge.

Further reading

- Osaka University Cuts Power Consumption by 13% with Kubernetes and AI

- Machine Learning Constitutes 65% of Kubernetes Workloads

- Denso Delivers an IoT Prototype per Week with Kubernetes

About the experts

Kevin Wang is Lead of the Cloud Native Open Source Team at Huawei. He has been a contributor to the CNCF community since its beginning. Kevin is a cofounder of multiple projects, including KubeEdge, Karmada, and Volcano. He has contributed to upstream Kubernetes for years and focuses all of his work on the development of the wider open-source cloud-native community.

Yin Ding is Engineering Manager at Google, where he leads the Kubernetes Hardening team. He has more than 15 years of experience in large-scale and distributed computing. Yin has led numerous in-house cloud-native efforts and projects and is an active member of various open-source communities. Previously, he worked at Pure Storage, VMware, Expedia, and Microsoft, among others. Yin is an early founder of the KubeEdge project.

Huan Wei is Chief Architect at Harmony Cloud, where he designs and implements private cloud construction. He has 10+ years of experience in software design and development. Huan has expertise in cloud computing, microservices, AI, and data warehousing. At present, he is committed to the design and implementation of edge computing for many large enterprise customers from the telecommunication, finance, transportation, electric power, and other industries.