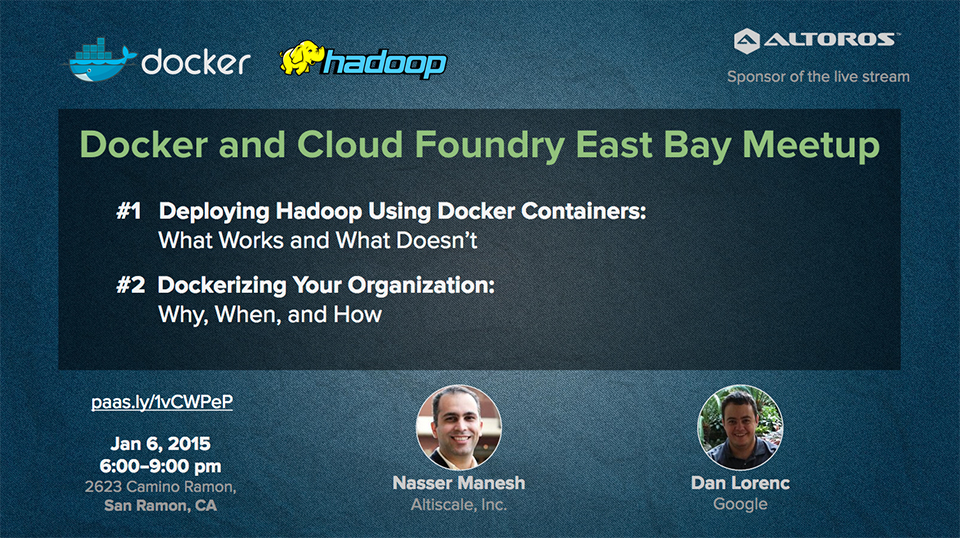

Deploying Hadoop with Docker: Why, When, and Lessons Learned

Why Docker, and when to use it?

Like a virtual machine (VM), Docker runs processes in an isolated, preconfigured environment. However, Docker's approach sits somewhere between running an application directly on a physical server and the full hardware simulation that VMs provide: containers share the host's operating system kernel instead of booting a guest OS of their own.

At a recent meetup organized by Altoros, Dan Lorenc of Google explained when, why, and how one can adopt Docker for development practices.

“If you use Docker, I think the best killer feature is that it provides natural separation of concerns between your developers and your Ops team. So, your developers can focus on just writing and shipping code that will run inside a container. You don’t have to care how containers are going to get run in production…You can completely rewrite the application from Java to Python and your Ops team probably won’t notice that.” —Dan Lorenc, Google

Dan then explained how Docker saves time and effort. If you're trying to combine multiple server applications on one VM to get better hardware utilization, you have to worry about conflicting dependencies and configuration. Docker makes this much easier by bundling the packages, configuration, and source code of your application into a single Docker image. Your app runs with exactly the same dependencies as it will in production, so it is much harder to end up in a scenario where the code runs in your sandbox but fails in production.

Dan Lorenc, Google

According to Dan, we get the most out of Docker when we run an app that is deployed quite often. There is not really much point in investing a lot of time ‘dockerizing’ something that you only upgrade and deploy once a year.

Dan thinks that much of the confusion comes from how much Docker actually does. That’s why many teams struggle to understand what Docker is and how to use it to set up a productive workflow. His tip: you don’t have to fully understand every part of the Docker ecosystem to implement it well and become more productive.

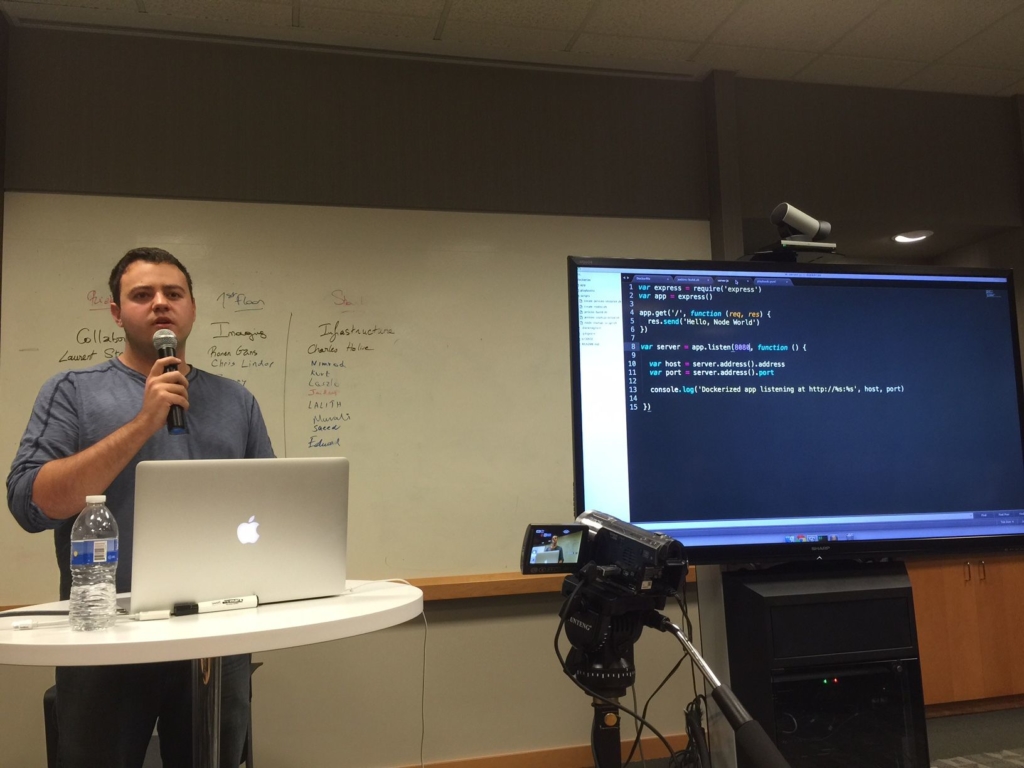

At the event, Dan also demonstrated continuous delivery with Docker, Jenkins, and Ansible.

Hadoop in Docker: things to know

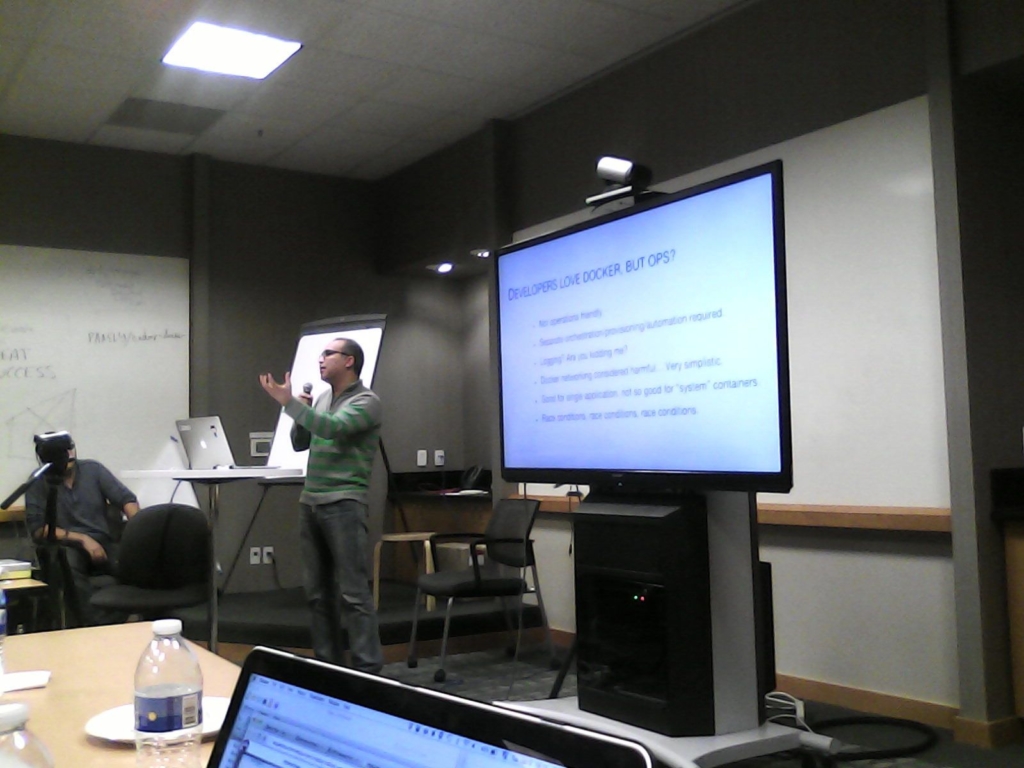

Next, Nasser Manesh of Altiscale shared the company’s experience with deploying multi-tenant Hadoop clusters using Docker. (Altiscale was looking for a way to run Hadoop in a multi-tenant configuration and extract maximum efficiency from the system.) The talk covered the differences between containers and VMs and addressed typical issues with configuration, monitoring, troubleshooting, etc.

Nasser Manesh, Altiscale

“How do you partition and reallocate your machines to different jobs from different customers? Hadoop itself has built-in capabilities for this. However, they are not what we need, especially when it comes to a multi-tenant environment where you want tight security. You don’t want customer A to see anything about customer B…You can use containers, which is your way of doing a lightweight version of virtualization. Lightweight is important here, because every CPU cycle counts. Every CPU cycle that is not used on a customer job is being used by your hypervisor as a core. So, we’re trying to minimize those cores.” —Nasser Manesh, Altiscale
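To make the idea of partitioning one machine between customers more concrete, here is a minimal, hypothetical sketch (not Altiscale's tooling) built on the Linux cgroup CPU controller that container runtimes such as Docker rely on. It converts a per-tenant CPU allocation into a cgroup v2 `cpu.max` value ("quota period" in microseconds), using the same quota/period arithmetic Docker documents for `--cpus` with its default 100 ms period, and it refuses to overcommit the host. The tenant names, the `plan()` helper, and the `reserved` parameter are assumptions for illustration only.

```python
from dataclasses import dataclass

# Default CFS period (100 ms); Docker documents --cpus=1.5 as
# equivalent to --cpu-period=100000 --cpu-quota=150000.
CFS_PERIOD_US = 100_000


@dataclass
class Tenant:
    name: str    # hypothetical tenant identifier
    cpus: float  # CPU allocation, same meaning as `docker run --cpus`


def cpu_max(cpus: float, period_us: int = CFS_PERIOD_US) -> str:
    """Return a cgroup v2 cpu.max value ("<quota> <period>") for a CPU limit."""
    if cpus <= 0:
        raise ValueError("cpus must be positive")
    quota_us = round(cpus * period_us)
    return f"{quota_us} {period_us}"


def plan(host_cpus: int, tenants: list[Tenant], reserved: float = 0.0) -> dict[str, str]:
    """Map each tenant to a cpu.max limit, refusing to overcommit the host.

    `reserved` (hypothetical) models CPUs kept back for the host itself,
    i.e. capacity that no customer job can use.
    """
    requested = sum(t.cpus for t in tenants)
    available = host_cpus - reserved
    if requested > available:
        raise ValueError(f"requested {requested} CPUs but only {available} available")
    return {t.name: cpu_max(t.cpus) for t in tenants}


if __name__ == "__main__":
    limits = plan(16, [Tenant("customer-a", 6), Tenant("customer-b", 8.5)], reserved=1.0)
    print(limits)  # {'customer-a': '600000 100000', 'customer-b': '850000 100000'}
```

The `reserved` knob reflects Nasser's point that every cycle spent outside customer jobs is overhead: the smaller the host's own footprint, the more capacity is left to partition among tenants.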

The question was, “Is it Docker in Hadoop or Hadoop in Docker?” Nasser reviewed both options.

About the experts

Dan Lorenc is a software engineer at Google experienced in building developer tools with Python, Go, and .NET. He has worked at companies ranging from small startups to Microsoft and Google. Dan is currently bringing the power and flexibility of Docker to Google App Engine. He also wrote the Google Compute Engine driver for the new Docker Machine tool.

Nasser Manesh is a Senior Engineer, Infrastructure/Operations, at Altiscale, with 25+ years of experience in UNIX, infrastructure, distributed systems, automation, and production engineering/operations. He has founded startups in consumer Internet, mobile, photography, and art. Today, Nasser focuses on big data infrastructure, Hadoop core (HDFS/YARN), Chef, Linux cgroups, and Docker at scale.