A Comparison of Redis Cloud, ElastiCache, openredis, RedisGreen, and Redis To Go

Most performance comparisons treat Redis, an open-source key-value cache and store, as a caching-only solution, and others focus on a single provider. Customers, however, are also interested in making deeper use of its built-in data types and server-side operations. In production, several loads with different types of tasks may query your database simultaneously.

Altoros has released a study that evaluates Redis performance under more complicated conditions. It combines two types of queries, simple and complex, generated concurrently. In this blog post, we share some of the main findings.

Redis-as-a-service providers available

We compared the following Redis-as-a-service offerings available on Amazon AWS.

- Redis Cloud Standard (by Redis Labs)

- Redis Cloud Cluster (by Redis Labs)

- ElastiCache (by Amazon)

- openredis (by Amakawa)

- RedisGreen (by Stovepipe Studios)

- Redis To Go (by Exceptional Cloud Services / Rackspace)

For each vendor, we used its best-performing Redis-as-a-service (RaaS) plan running on a single AWS EC2 instance. All of the server instances were located in the same region as the benchmarking client.

Workload scenarios in use

Most benchmarks are limited to CRUD operations and evaluate only basic Redis capabilities. To test its advanced functionality, we ran six popular RaaS offerings through three different scenarios.

The simple workload consisted of SET and GET operations in a 1:1 ratio and used two pipeline sizes (4 and 50).
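To make the shape of this workload concrete, here is a minimal sketch of how a 1:1 SET/GET command stream can be grouped into pipelined batches. It is an illustration, not the tool used in the study: the helper names (`mixed_commands`, `batched`, `run_simple_workload`), the key naming, and the `key_space` parameter are all assumptions, and `client` is assumed to be a redis-py client object.

```python
from itertools import islice


def mixed_commands(n_ops, key_space=1000):
    """Yield n_ops commands that alternate SET and GET (1:1 ratio).

    Key names and the key_space size are hypothetical.
    """
    for i in range(n_ops):
        key = f"key:{(i // 2) % key_space}"
        if i % 2 == 0:
            yield ("SET", key, f"value-{i}")
        else:
            yield ("GET", key)  # reads the key written by the previous SET


def batched(commands, pipeline_size):
    """Group commands into lists of at most pipeline_size items."""
    it = iter(commands)
    while True:
        batch = list(islice(it, pipeline_size))
        if not batch:
            return
        yield batch


def run_simple_workload(client, n_ops, pipeline_size):
    """Send every batch in a single round trip and count the replies.

    Assumes `client` behaves like a redis-py client.
    """
    replies = 0
    for batch in batched(mixed_commands(n_ops), pipeline_size):
        pipe = client.pipeline(transaction=False)  # plain pipelining, no MULTI/EXEC
        for cmd in batch:
            pipe.execute_command(*cmd)  # queued locally until execute()
        replies += len(pipe.execute())
    return replies
```

With a pipeline size of 4, every network round trip carries four commands; with 50, it carries fifty, so the larger size spends proportionally less time waiting on the network for the same number of operations.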

The complex workload consisted of ZUNIONSTORE operations on four sorted sets. In this scenario, increasing the number of client threads increased the number of requests for Redis to process, which let us evaluate the scalability of the RaaS solutions.
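For readers less familiar with ZUNIONSTORE, the sketch below models in plain Python what the command computes with its default settings: the union of the input sorted sets, with the scores of shared members summed. The in-memory `store` dictionary and the function names are assumptions made for illustration; a real server does this work itself, which is why the operation is much heavier than a single SET or GET.

```python
def zunionstore(store, dest, keys):
    """Model ZUNIONSTORE with default weights (1) and aggregate (SUM).

    `store` is a hypothetical in-memory stand-in for Redis:
    it maps a key to a {member: score} dictionary.
    Returns the number of members in the resulting set.
    """
    merged = {}
    for key in keys:
        for member, score in store.get(key, {}).items():
            merged[member] = merged.get(member, 0.0) + score
    store[dest] = merged
    return len(merged)


def zrange_withscores(store, key):
    """Return members ordered by score, then by member name."""
    return sorted(store.get(key, {}).items(), key=lambda kv: (kv[1], kv[0]))


# Four small sorted sets, mirroring the "four sorted sets" of the workload.
store = {
    "z1": {"a": 1.0, "b": 2.0},
    "z2": {"b": 3.0, "c": 4.0},
    "z3": {"a": 5.0},
    "z4": {"d": 1.5},
}
size = zunionstore(store, "out", ["z1", "z2", "z3", "z4"])
ranking = zrange_withscores(store, "out")
```

The cost grows with the total size of the inputs plus the sorting of the result, so each request keeps the server busy far longer than a simple key lookup does.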

The combined workload imitated a real-life use case: each Redis-as-a-service provider was benchmarked under CRUD operations and server-side operations on built-in data types, with both running concurrently.
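A combined run of this kind can be sketched as two groups of threads that share one time window and keep separate counters. The harness below is only an assumption about how such a test could be structured, not the benchmark's actual code: `run_combined` and its parameters are hypothetical, and `simple_op` and `complex_op` stand for any callables that issue one simple or one complex request.

```python
import threading
import time


def run_combined(simple_op, complex_op, simple_threads, complex_threads, duration):
    """Run both kinds of operations concurrently for about `duration` seconds.

    Returns (counts, rates): completed operations and operations per second,
    each keyed by "simple" and "complex".
    """
    counts = {"simple": 0, "complex": 0}
    lock = threading.Lock()
    stop = threading.Event()

    def worker(kind, op):
        done = 0
        while True:
            op()
            done += 1
            if stop.is_set():
                break
        with lock:  # merge the per-thread tally once, at the end
            counts[kind] += done

    threads = [threading.Thread(target=worker, args=("simple", simple_op))
               for _ in range(simple_threads)]
    threads += [threading.Thread(target=worker, args=("complex", complex_op))
                for _ in range(complex_threads)]

    start = time.perf_counter()
    for t in threads:
        t.start()
    time.sleep(duration)
    stop.set()
    for t in threads:
        t.join()
    elapsed = time.perf_counter() - start

    rates = {kind: n / elapsed for kind, n in counts.items()}
    return counts, rates
```

Keeping the two counters separate is the point of the design: it lets you see how the throughput of simple operations changes while complex operations compete for the same server.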

Reality: how parallel loads behave

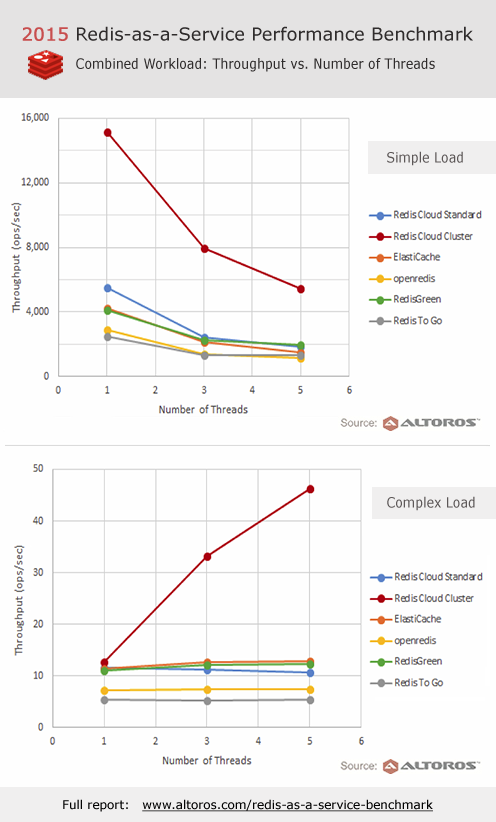

The differences between the RaaS solutions were most visible in the combined scenario, where the simple and complex workloads were launched simultaneously. In addition, as the number of threads increased, some intriguing patterns emerged in the behavior of simple and complex operations. The results are shown in the charts below.

The performance benchmark under the combined workload

The main insights based on the performance results of the combined workload:

- While the throughput of complex operations either increased or stayed almost the same, the performance of simple operations degraded as the number of threads increased. This behavior was observed across all RaaS providers.

- Redis Cloud Cluster (by Redis Labs) outperformed the rest of the RaaS offerings in most use cases. This was the only solution that still scaled linearly in the combined scenario.

- Given how complex operations influence the performance of simple ones, Redis-as-a-service solutions may behave quite differently under different types of load. This makes it necessary to evaluate each particular case according to its complexity, type of load, number of threads, etc.

For more results with performance diagrams and tables on exact latencies and throughput data, check out the full version of this Redis-as-a-service benchmark.
