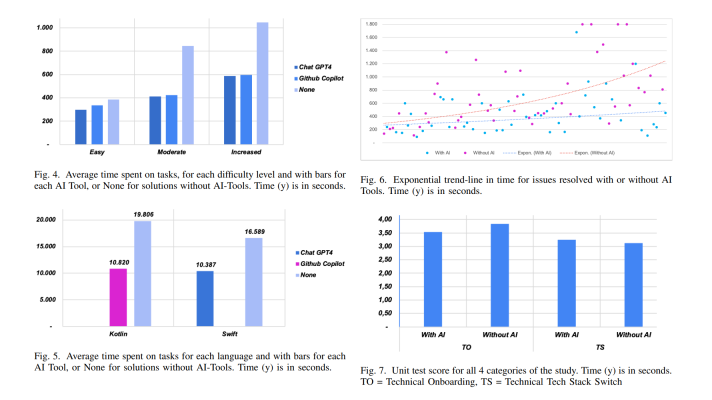

TensorFlow for Manufacturers: Building a 3D Rendering Engine

Machine learning is making its way into an ever broader range of industries. TensorFlow is applied to an array of tasks, from predicting wildfires to generating content.

At a recent meetup in San Francisco, attendees learned about the pitfalls that may come up when developing a rendering engine and how TensorFlow helps address them. In addition, a speaker from Autodesk showed how the company employs TensorFlow to categorize 3D data, enable robots to assemble structures, and more.

Using geometric representations to build a graphic app

Andrew Taber, a software engineer at Intact Solutions, demonstrated how to build graphics applications (e.g., a rendering engine) with TensorFlow and, along the way, better understand the structure and interconnections of neural networks.

Starting with geometric representations, Andrew enumerated three types, pointing out the advantages and downsides of each:

- Polygonal (meshes)

- Parametric (a boundary representation), which represents geometry as the image of a function. It makes accessing local data simple by walking the tree in any direction. Still, it is hard to query point membership or perform boolean operations.

For example, modeling a complex shape requires a lot of parametric patches, which basically results in “exploding memory cost for things like microstructures.”

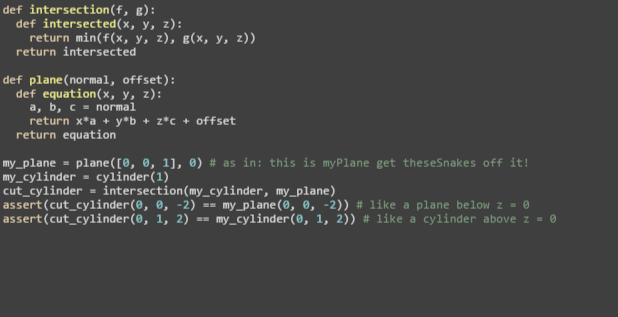

- Implicit (a function representation), which represents geometry as the kernel (zero set) of a function. Unlike parametric representations, it makes boolean operations easy to run.

Moreover, one can model shapes of arbitrary complexity with low storage overhead.
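To make the contrast concrete, here is a minimal plain-Python sketch (not from the talk; all names are hypothetical) of a circle in both forms. The parametric form generates points as the image of t → (cos t, sin t), so deciding whether an arbitrary point is inside would need extra machinery. The implicit form f(x, y) = x² + y² − 1 answers membership with a single sign check, and boolean operations reduce to pointwise min/max. Note that this f is implicit but not yet a signed distance function.

```python
import math

# Parametric form: the circle is the image of t -> (cx + r*cos t, cy + r*sin t).
def circle_param(t, cx=0.0, cy=0.0, r=1.0):
    return (cx + r * math.cos(t), cy + r * math.sin(t))

# Implicit form: the circle is the zero set of f (f < 0 inside, f > 0 outside).
def circle_implicit(cx=0.0, cy=0.0, r=1.0):
    return lambda x, y: (x - cx) ** 2 + (y - cy) ** 2 - r ** 2

def union(f, g):
    return lambda x, y: min(f(x, y), g(x, y))

def intersection(f, g):
    return lambda x, y: max(f(x, y), g(x, y))

def difference(f, g):
    # Inside f and outside g: negating g swaps its inside and outside.
    return lambda x, y: max(f(x, y), -g(x, y))

def inside(f, x, y):
    # Point membership is one evaluation and a sign test.
    return f(x, y) <= 0.0

a = circle_implicit(0.0, 0.0, 1.0)
b = circle_implicit(1.0, 0.0, 1.0)
lens = intersection(a, b)
crescent = difference(a, b)

# Every parametric sample lands on the implicit zero set (up to rounding).
for k in range(8):
    px, py = circle_param(2 * math.pi * k / 8)
    assert abs(a(px, py)) < 1e-12

print(inside(lens, 0.5, 0.0))       # True: inside both circles
print(inside(crescent, 0.5, 0.0))   # False: carved away by b
print(inside(crescent, -0.5, 0.0))  # True: in a but not in b
```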

Challenges to address

When building a rendering engine, one has to be aware of some pitfalls:

- It is time-consuming.

- User interaction is not intuitive.

To address these issues, Andrew made use of signed distance functions (SDFs), a special case of implicit representations. In the course of the project, an additional constraint was imposed at every point: the magnitude of the function must underestimate (never exceed) the distance to the nearest surface. This matters because some surfaces do not have closed-form distance functions.
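A minimal sketch of what the underestimate constraint means, assuming 2D for brevity (the talk dealt with 3D) and using illustrative function names. The exact SDF of an axis-aligned box returns the true distance, while building the same box as an intersection (max) of four half-planes returns a value that is never larger than the true distance. That second function is not exact near the corners, but it still satisfies the constraint.

```python
import math

def sd_box(x, y, hx, hy):
    # Exact signed distance to an axis-aligned box with half-extents (hx, hy).
    qx, qy = abs(x) - hx, abs(y) - hy
    outside = math.hypot(max(qx, 0.0), max(qy, 0.0))
    inside = min(max(qx, qy), 0.0)
    return outside + inside

def sd_box_from_halfplanes(x, y, hx, hy):
    # The same box as an intersection (max) of four half-planes.
    # Exact inside the box and in the regions facing its edges,
    # but only a lower bound in the regions facing its corners.
    return max(x - hx, -x - hx, y - hy, -y - hy)

exact = sd_box(2.0, 2.0, 1.0, 1.0)
bound = sd_box_from_halfplanes(2.0, 2.0, 1.0, 1.0)
print(exact)  # 1.4142135623730951, i.e. sqrt(2) from the corner (1, 1)
print(bound)  # 1.0: an underestimate, so still a valid bound
```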

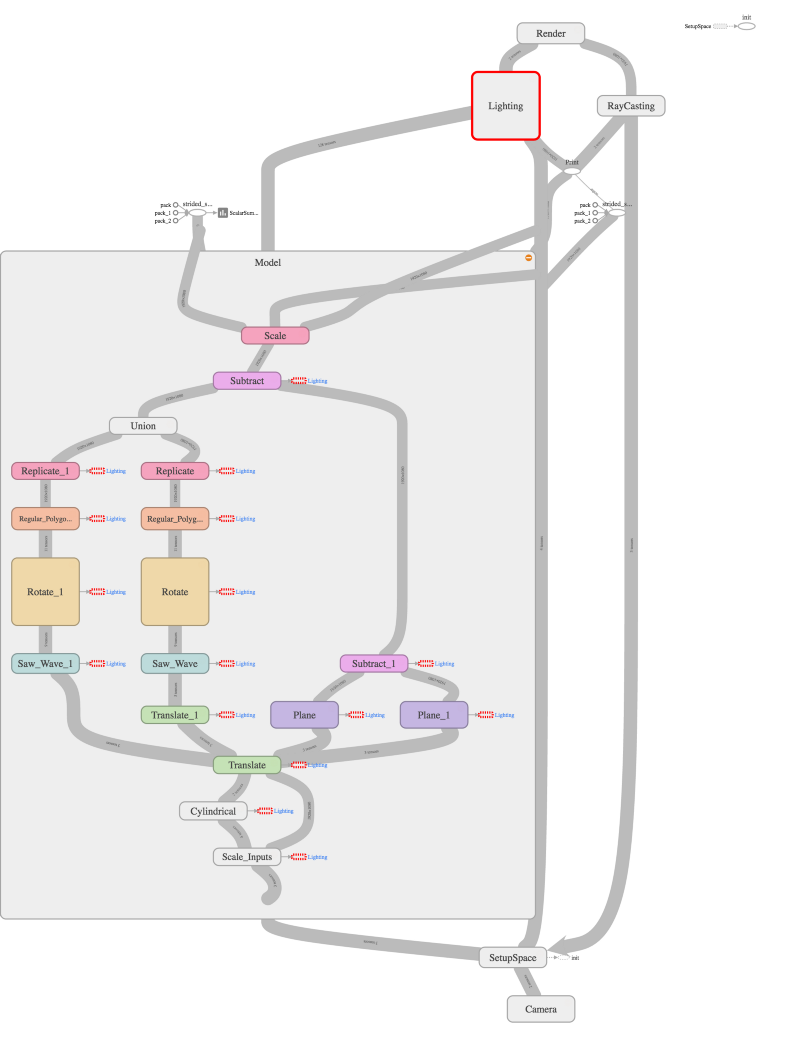

At a high level, the code above demonstrates how to build a syntax tree out of operations and geometries.
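Because the code above is only available as an image, here is a hypothetical plain-Python sketch of the same idea rather than Andrew's TensorFlow code. Geometries are leaves, operations are inner nodes, and evaluating the root at a point yields the signed distance of the whole model. The class names are illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float, float]

class Node:
    """Base class for every node of the modeling tree."""
    def sdf(self, p: Point) -> float:
        raise NotImplementedError

@dataclass(frozen=True)
class Sphere(Node):
    center: Point
    radius: float
    def sdf(self, p: Point) -> float:
        return math.dist(p, self.center) - self.radius

@dataclass(frozen=True)
class Union(Node):
    a: Node
    b: Node
    def sdf(self, p: Point) -> float:
        return min(self.a.sdf(p), self.b.sdf(p))

@dataclass(frozen=True)
class Intersection(Node):
    a: Node
    b: Node
    def sdf(self, p: Point) -> float:
        # max-based operations give a bound, not always the exact distance.
        return max(self.a.sdf(p), self.b.sdf(p))

@dataclass(frozen=True)
class Difference(Node):
    a: Node
    b: Node
    def sdf(self, p: Point) -> float:
        return max(self.a.sdf(p), -self.b.sdf(p))

# Two overlapping spheres with a smaller sphere carved out of the top.
model = Difference(
    Union(Sphere((0.0, 0.0, 0.0), 1.0), Sphere((1.5, 0.0, 0.0), 1.0)),
    Sphere((0.0, 0.0, 1.0), 0.5),
)
print(model.sdf((0.0, 0.0, 0.0)))  # -0.5 (inside)
print(model.sdf((0.0, 0.0, 1.0)))  # 0.5 (carved away)
```

Making the nodes immutable keeps the tree a pure description of the shape, so the same tree can later be compiled or evaluated on many points at once.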

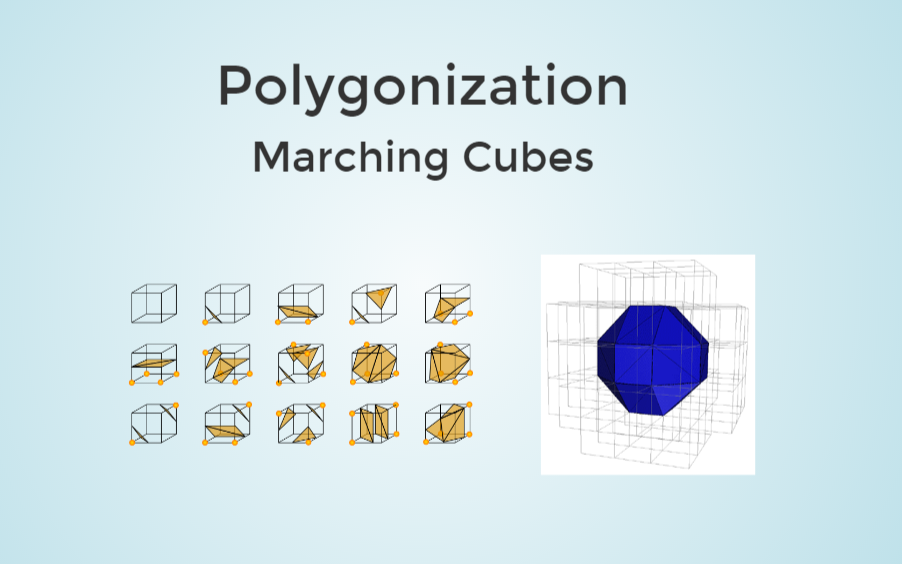

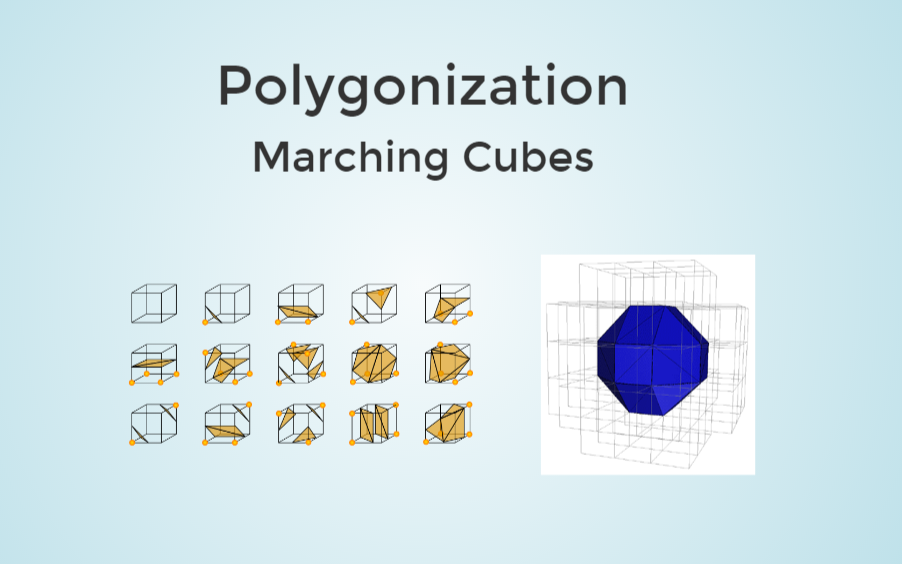

Polygonization vs. raytracing

Next, the signed distance functions need to be rendered, which can be done either by:

- polygonizing (i.e., populating a grid with values of the function and using this grid to approximate the surface with triangles)

- raytracing (i.e., setting up a camera in space, casting a ray from it for each pixel to intersect the surface, and then drawing the intersection points to the screen)
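For SDFs, raytracing is commonly done with sphere tracing. The talk did not name a specific raytracing algorithm, so treat this as an illustrative choice. Because an SDF never overestimates the distance to the surface, a ray can safely advance by the SDF value at each step. A minimal sketch, assuming a unit sphere, a pinhole camera at z = −3 looking along +z, and hypothetical helper names:

```python
import math

def sd_sphere(p, r=1.0):
    # Signed distance to a sphere of radius r centered at the origin.
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - r

def sphere_trace(sdf, origin, direction, max_steps=128, eps=1e-6, t_max=100.0):
    # Normalize the direction so that t measures distance along the ray.
    n = math.sqrt(sum(c * c for c in direction))
    d = [c / n for c in direction]
    t = 0.0
    for _ in range(max_steps):
        p = [o + t * c for o, c in zip(origin, d)]
        dist = sdf(p)
        if dist < eps:
            return t  # hit: distance along the ray to the surface
        t += dist     # safe step: no surface is closer than dist
        if t > t_max:
            break
    return None       # miss (or step budget exhausted)

def render(sdf, width, height):
    # One ray per pixel; returns a hit mask instead of shaded colors.
    rows = []
    for j in range(height):
        row = []
        for i in range(width):
            u = (i + 0.5) / width * 2 - 1
            v = (j + 0.5) / height * 2 - 1
            row.append(sphere_trace(sdf, (0.0, 0.0, -3.0), (u, v, 1.0)) is not None)
        rows.append(row)
    return rows

for row in render(sd_sphere, 9, 9):
    print("".join("#" if hit else "." for hit in row))
```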

The architectural challenge lies in building algebraic expressions in a way that allows for:

- reusing computation results automatically

- performing efficient computations on large arrays of input data

- parallelizing
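The first requirement, reusing computation results automatically, amounts to treating the expression as a directed acyclic graph in which identical subexpressions are stored and evaluated once. The sketch below illustrates this with hash-consing in plain Python. It is an assumption-laden toy (the `Expr` type and helpers are hypothetical), not TensorFlow's API; TensorFlow performs its own graph optimizations.

```python
import math
from dataclasses import dataclass
from typing import Dict, Tuple

@dataclass(frozen=True)
class Expr:
    op: str                        # "var", "const", "add", "mul", "min" or "max"
    args: Tuple["Expr", ...] = ()
    value: object = None           # variable name or constant value for leaves

_table: Dict[Expr, Expr] = {}

def node(op, *args, value=None):
    # Hash-consing: a structurally identical node is returned instead of a new one.
    e = Expr(op, tuple(args), value)
    return _table.setdefault(e, e)

OPS = {"add": sum, "mul": math.prod, "min": min, "max": max}

def evaluate(root, env):
    # Evaluate each distinct node once; returns (value, number of nodes evaluated).
    cache = {}
    def go(e):
        if e in cache:
            return cache[e]
        if e.op == "var":
            result = env[e.value]
        elif e.op == "const":
            result = e.value
        else:
            result = OPS[e.op]([go(a) for a in e.args])
        cache[e] = result
        return result
    return go(root), len(cache)

def tree_size(e):
    # Node count if the expression were stored as a plain tree (no sharing).
    return 1 + sum(tree_size(a) for a in e.args)

x, y = node("var", value="x"), node("var", value="y")

def sq_norm():
    # Rebuilt on every call, yet hash-consing hands back the same shared nodes.
    return node("add", node("mul", x, x), node("mul", y, y))

neg_one = node("const", value=-1.0)
inner = node("add", sq_norm(), neg_one)                    # x^2 + y^2 - 1
outer = node("add", sq_norm(), node("const", value=-4.0))  # x^2 + y^2 - 4
ring = node("max", outer, node("mul", neg_one, inner))     # inside outer, outside inner

print(evaluate(ring, {"x": 1.0, "y": 1.0}))  # (-1.0, 11)
print(tree_size(ring))                        # 21
```

Here 11 shared nodes do the work of a 21-node tree, because `x^2 + y^2` and the constant `-1.0` are computed only once.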

Polygonizing with TensorFlow

This is where TensorFlow steps in, enabling:

- common subexpression elimination

- automatic differentiation

- compilation to GPU code
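Automatic differentiation pays off directly in rendering: the normal of an SDF surface is the normalized gradient of the function. TensorFlow computes gradients with reverse-mode differentiation over its graph. The sketch below illustrates the same principle with forward-mode dual numbers instead (a different technique, chosen only for brevity). The `Dual` class and helpers are hypothetical.

```python
import math

class Dual:
    """A value paired with its derivative along one chosen input."""
    def __init__(self, val, der=0.0):
        self.val, self.der = val, der

    def _lift(self, o):
        return o if isinstance(o, Dual) else Dual(o)

    def __add__(self, o):
        o = self._lift(o)
        return Dual(self.val + o.val, self.der + o.der)
    __radd__ = __add__

    def __sub__(self, o):
        o = self._lift(o)
        return Dual(self.val - o.val, self.der - o.der)

    def __mul__(self, o):
        o = self._lift(o)
        # Product rule.
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)
    __rmul__ = __mul__

def dsqrt(x):
    # d/dx sqrt(x) = 1 / (2 sqrt(x))
    s = math.sqrt(x.val)
    return Dual(s, x.der / (2.0 * s))

def sd_sphere(x, y, z, r=1.0):
    return dsqrt(x * x + y * y + z * z) - r

def gradient(f, p):
    # One forward pass per input, seeding that input's derivative with 1.
    grads = []
    for i in range(len(p)):
        args = [Dual(v, 1.0 if j == i else 0.0) for j, v in enumerate(p)]
        grads.append(f(*args).der)
    return grads

def normal(f, p):
    g = gradient(f, p)
    n = math.sqrt(sum(c * c for c in g))
    return [c / n for c in g]

print(normal(sd_sphere, [0.0, 0.0, 2.0]))  # [0.0, 0.0, 1.0]
```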

Since polygonization is the simpler method of rendering implicit surfaces, Andrew chose it.

During polygonization, Andrew used the marching cubes algorithm to generate triangles from a volumetric grid of function values, and the TensorFlow Variable class to initialize the coordinate tensors.
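A full marching cubes implementation relies on a 256-entry triangle lookup table, which is too long to reproduce here. Its first steps are easy to sketch, though: sample the function on a regular grid, build an 8-bit index per cell from the signs of its corners, and place vertices where edges cross zero. Cells with index 0 or 255 lie entirely outside or inside the surface and produce no triangles. This is a plain-Python sketch with hypothetical names; Andrew's version held the grid in TensorFlow tensors.

```python
import math

def sd_sphere(x, y, z, r=1.0):
    return math.sqrt(x * x + y * y + z * z) - r

def sample_grid(f, n, lo=-1.5, hi=1.5):
    # Evaluate f at (n + 1)^3 grid vertices spanning [lo, hi]^3, indexed [x][y][z].
    step = (hi - lo) / n
    coords = [lo + i * step for i in range(n + 1)]
    return [[[f(x, y, z) for z in coords] for y in coords] for x in coords]

# Corner offsets of a cell: bottom face first, then top face.
# A triangle table must be built for this same ordering.
CORNERS = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
           (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1)]

def cube_index(values, i, j, k):
    # Bit b is set when corner b lies inside the surface (negative value).
    idx = 0
    for b, (di, dj, dk) in enumerate(CORNERS):
        if values[i + di][j + dj][k + dk] < 0.0:
            idx |= 1 << b
    return idx

def surface_cells(values):
    # Cells whose corners disagree in sign are the ones the surface crosses.
    n = len(values) - 1
    cells = []
    for i in range(n):
        for j in range(n):
            for k in range(n):
                idx = cube_index(values, i, j, k)
                if idx not in (0, 255):
                    cells.append(((i, j, k), idx))
    return cells

def edge_vertex(p0, p1, v0, v1):
    # Linear interpolation of the zero crossing between corners of opposite sign.
    t = v0 / (v0 - v1)
    return tuple(a + t * (b - a) for a, b in zip(p0, p1))

grid = sample_grid(sd_sphere, 4)
print(len(surface_cells(grid)), "cells intersect the surface")
```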

This is the output Andrew got from his TensorFlow implementation:

(Note: On an Amazon GPU instance, it took Andrew about a minute to render a single 1080p frame, which is not competitive with raytracing via GPU shaders. However, because the image is a tensor in TensorFlow, one can backpropagate the error through the rendering.)
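Because each step is differentiable, an error measured on the output can flow back to the shape parameters. As a toy illustration only (Andrew backpropagated through a rendered image tensor, which this does not reproduce), the sketch below fits a sphere's radius to sample points by gradient descent on a squared-SDF loss. The gradient is derived by hand here; in TensorFlow it would come from automatic differentiation. The function name and hyperparameters are assumptions.

```python
import math

def fit_radius(points, r=0.1, lr=0.1, steps=200):
    # Loss L(r) = mean((|p| - r)^2), i.e. the mean squared SDF of the samples.
    dists = [math.sqrt(x * x + y * y + z * z) for x, y, z in points]
    for _ in range(steps):
        residuals = [d - r for d in dists]             # SDF values at the samples
        grad = -2.0 * sum(residuals) / len(residuals)  # dL/dr
        r -= lr * grad                                 # gradient descent step
    return r

# Samples taken from a sphere of radius 2 (the "target" surface).
pts = [(2.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 0.0, -2.0), (0.0, -2.0, 0.0)]
print(round(fit_radius(pts), 6))  # 2.0
```

With this loss, each step shrinks the remaining error by a constant factor of 0.8, so the radius converges to the mean sample distance.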

In addition to all the automation TensorFlow brings in, the library gives a helping hand when one needs to debug a huge computation tree: wrapping each function in a call to tf.name_scope makes it possible to visualize each conceptual piece of the tree separately.

For more details, you can read Andrew’s blog post on the topic or browse through his presentation. You can also watch the video from the meetup below.

How Autodesk makes use of TensorFlow

Mike Haley, Senior Director of Machine Intelligence at Autodesk, provided insight into four ways TensorFlow is applied at the company:

- learning predictive and generative models to categorize large amounts of 3D data

- designing graphs

- controlling robots to assemble structures

- delivering computational fluid dynamics and thermal simulation tools

Check out the video below for his session. Also, don't forget to join our group to stay informed about upcoming events.

Want details? Watch the video!


About the experts