Running Amazon’s Container Services on AWS Fargate

Two container orchestration options on AWS

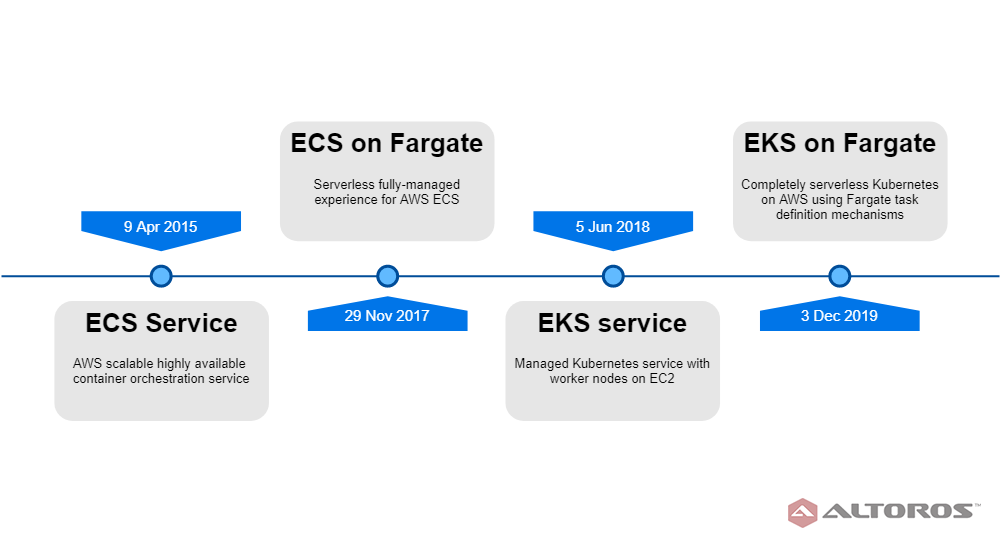

Amazon has offered container orchestration tools for years. Since 2015, the company has been providing users with Elastic Container Service (ECS), a secure, scalable, production-grade container management platform. In June 2018, Amazon also introduced Elastic Kubernetes Service (EKS), a platform that enables engineers to run workloads without the need to independently maintain a K8s control plane.

Previously, the only option for running ECS was EC2. To remove the need to provision and manage servers, the company released AWS Fargate, a serverless compute engine for containers, in November 2017. Since December 2019, AWS Fargate has also been available for EKS.

Amazon’s products release dates

To better understand the difference between running Amazon EKS and Amazon ECS on AWS Fargate, our Kubernetes engineers explored the compute engine’s capabilities and limitations to get firsthand experience.

What is AWS Fargate?

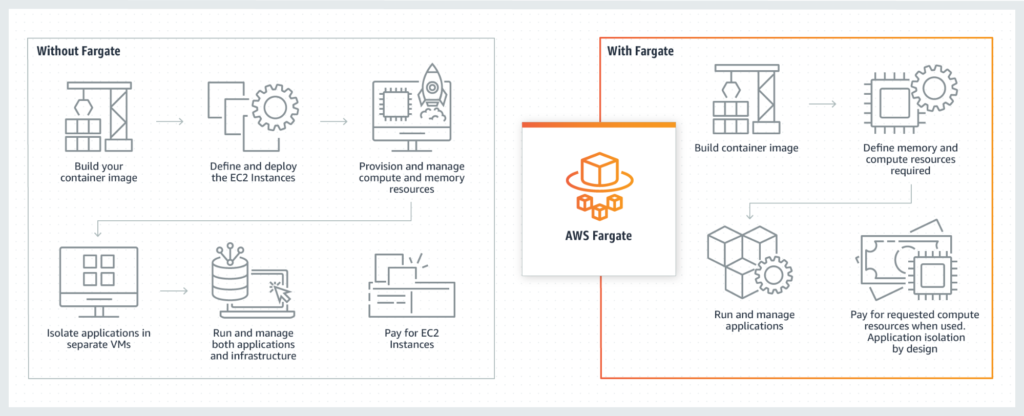

AWS Fargate enables operators to run containers without having to perform such mundane tasks as provisioning, managing, configuring, or scaling clusters of virtual machines. Although this decreases the control granularity, it is a common trade-off for all the managed services.

AWS Fargate removes the need to provision servers (Image credit)

AWS Fargate uses Firecracker, a virtualization technology built on the Linux Kernel-based Virtual Machine (KVM), for fast provisioning of lightweight virtual machines. When running a workload, AWS Fargate automatically creates a virtual machine that closely matches the requested CPU and memory resources. The table below shows the vCPU and memory combinations available for the resources running on AWS Fargate.
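
To illustrate what "closely matches" can mean in practice, here is a minimal Python sketch that picks the smallest size covering a request. The size table is an assumption based on the task sizes AWS documented for Fargate around the time of writing, so verify it against the current AWS documentation. `smallest_fargate_size` is a hypothetical helper, not an AWS API.

```python
from typing import Optional, Tuple

# Assumed vCPU -> allowed memory values in GB (not taken from this article).
FARGATE_SIZES = {
    0.25: [0.5, 1, 2],
    0.5: [1, 2, 3, 4],
    1: list(range(2, 9)),    # 2..8 GB in 1 GB steps
    2: list(range(4, 17)),   # 4..16 GB in 1 GB steps
    4: list(range(8, 31)),   # 8..30 GB in 1 GB steps
}


def smallest_fargate_size(vcpu: float, memory_gb: float) -> Optional[Tuple[float, float]]:
    """Return the smallest (vCPU, memory GB) pair that covers the request.

    Sizes are tried in order of increasing vCPU, then increasing memory.
    Returns None when no size in the assumed table is large enough.
    """
    for cpu in sorted(FARGATE_SIZES):
        if cpu < vcpu:
            continue
        for mem in FARGATE_SIZES[cpu]:
            if mem >= memory_gb:
                return (cpu, mem)
    return None
```

For example, a request for 1 vCPU and 10 GB cannot be served by the 1 vCPU tier in this table, so the helper moves up to the 2 vCPU tier.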

On AWS Fargate, each node runs in its own dedicated kernel runtime environment and doesn’t share CPU, memory, storage, or network resources with other nodes. This ensures isolation and improved security.

Amazon ECS on AWS Fargate

Amazon ECS serves as one of the first production-grade scalable container orchestration services. Prior to AWS Fargate, Amazon EC2 was the only launch option available for ECS. Users had to create virtual machines to run workloads, and it was the operators’ responsibility to patch, upgrade, and otherwise maintain the machines. After AWS Fargate was introduced in November 2017, users could choose between this newer and fully managed, but more expensive solution, or an older and affordable alternative hosted on their EC2 instances.

When writing a task definition, an operator lists the launch options that the task supports. At launch time, the operator then chooses which of them to use. AWS Fargate will automatically provision a virtual machine that can handle the workload. An operator cannot see or interact with this virtual machine directly: there is no option to SSH into the machine or to affect the task execution in any other way. This also means that there is no way to execute commands inside the container.

In Amazon ECS, the launch options differ more than they do in Amazon EKS. The workload and node definitions live in a single JSON object, so a user has to define the launch type together with the workload. An operator can also declare a task compatible with both AWS Fargate and Amazon EC2, but still has to pick a launch type when launching the task and manually migrate workloads if that choice changes. An example of the ECS configuration file is shown below.

    {
      "family": "",
      "taskRoleArn": "",
      "executionRoleArn": "",
      "networkMode": "host",
      "containerDefinitions": [
        ...
      ],
      "placementConstraints": [
        {
          "type": "memberOf",
          "expression": ""
        }
      ],
      "requiresCompatibilities": [
        "FARGATE"
      ],
      "cpu": "",
      "memory": "",
      "tags": [
        ...
      ],
      "pidMode": "task",
      "ipcMode": "host",
      "proxyConfiguration": {
        ...
      },
      "inferenceAccelerators": [
        ...
      ]
    }
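
The skeleton above is a generic template. As far as we know, the Fargate launch type additionally requires the `awsvpc` network mode and task-level `cpu` and `memory` values, so `"networkMode": "host"` would have to change before the task could launch. The Python sketch below checks a task definition for these points. `fargate_problems` and the `demo-web` values are hypothetical, and the check covers only these few rules, not everything AWS validates.

```python
def fargate_problems(task_def: dict) -> list:
    """Return reasons why this task definition could not launch on Fargate.

    Only a few well-known rules are checked; an empty list does not
    guarantee that AWS will accept the definition.
    """
    problems = []
    if "FARGATE" not in task_def.get("requiresCompatibilities", []):
        problems.append("requiresCompatibilities must include FARGATE")
    if task_def.get("networkMode") != "awsvpc":
        problems.append("networkMode must be awsvpc")
    for key in ("cpu", "memory"):
        if not task_def.get(key):
            problems.append(f"task-level {key} is required")
    return problems


# Hypothetical minimal task definition, for illustration only.
task_def = {
    "family": "demo-web",
    "networkMode": "awsvpc",
    "requiresCompatibilities": ["FARGATE"],
    "cpu": "256",      # 0.25 vCPU, expressed in CPU units
    "memory": "512",   # MiB
    "containerDefinitions": [
        {"name": "web", "image": "nginx:latest", "essential": True}
    ],
}

if __name__ == "__main__":
    print(fargate_problems(task_def))
```

Running the same check with `"networkMode": "host"` and empty `cpu` and `memory` strings, as in the template, reports all three problems.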

One of the downsides of Amazon ECS is that it is not cloud-agnostic—a user cannot easily migrate the workload to another cloud or on-premises environments.

Amazon EKS on AWS Fargate

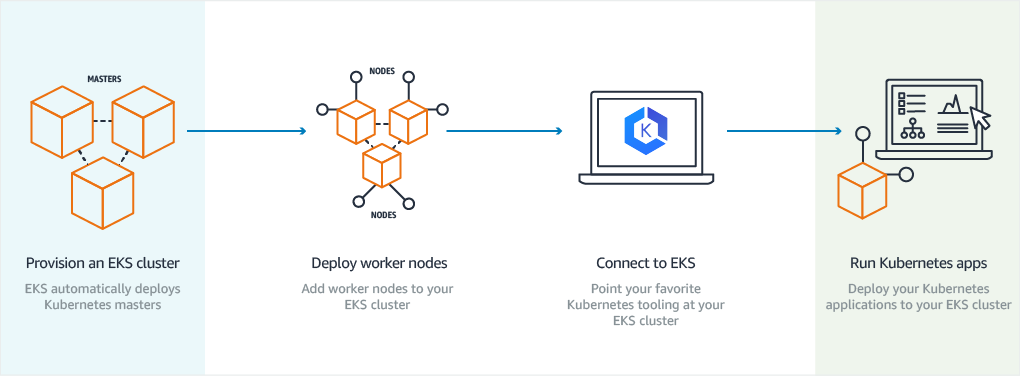

Amazon EKS is a managed service that enables workloads to run without the need to independently maintain a Kubernetes control plane. In EKS, Kubernetes control plane instances run across multiple availability zones to ensure high availability. In addition, the solution automatically detects and replaces unhealthy control plane instances, as well as provides automated version upgrades and patches.

When Amazon EKS was released in June 2018, it supported Amazon EC2 as the only compute option for its worker nodes. Users had to create an autoscaling group, deploy Kubernetes components to the nodes, as well as update and patch nodes manually. Even given the fact that node bootstrapping was done via the startup scripts, there was still a huge maintenance overhead.

Amazon EKS on Amazon EC2 (Image credit)

AWS Fargate provides optimal compute capacity on demand to containers running as Kubernetes pods on Amazon EKS. With AWS Fargate, pods run with the exact compute capacity they requested, and each pod runs in its own virtual machine–isolated environment without sharing resources with other pods. As a result, users can focus on designing and building applications rather than on managing the infrastructure and keeping it up to date.

For Amazon EKS, an operator has to define an AWS Fargate profile that specifies which workloads run on AWS Fargate. The profile's selectors make it possible to run some of the pods in a workload on Amazon EC2 and the others on AWS Fargate. The components of the AWS Fargate profile, which controls how Amazon EKS distributes the workload across the launch options, are shown in the configuration sample below.

    {
      "fargateProfileName": "",
      "clusterName": "",
      "podExecutionRoleArn": "",
      "subnets": [],
      "selectors": [
        {
          "namespace": "",
          "labels": {
            "KeyName": ""
          }
        }
      ],
      "tags": {
        "KeyName": ""
      }
    }

When AWS Fargate is configured, users can create a workload in Kubernetes. If the namespace and labels of the workload match a selector defined in the AWS Fargate profile, the solution will automatically use Firecracker to set up a new Kubernetes worker node that fits the particular manifest.
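
The matching rule can be sketched in Python as follows. This is a simplified model, assuming that a pod matches a selector when the namespace is equal and every label in the selector is present on the pod with the same value, and that matching any one selector is enough. The function names are hypothetical, and the real decision is made inside Amazon EKS, not by user code.

```python
def selector_matches(selector: dict, namespace: str, labels: dict) -> bool:
    """Check one profile selector against a pod's namespace and labels."""
    if selector.get("namespace") != namespace:
        return False
    wanted = selector.get("labels") or {}
    # Every label required by the selector must be set on the pod.
    return all(labels.get(key) == value for key, value in wanted.items())


def runs_on_fargate(profile: dict, namespace: str, labels: dict) -> bool:
    """A pod is assumed to go to Fargate if any selector in the profile matches."""
    return any(
        selector_matches(selector, namespace, labels)
        for selector in profile.get("selectors", [])
    )


# Hypothetical profile using the same shape as the sample above.
profile = {
    "fargateProfileName": "demo-profile",
    "selectors": [{"namespace": "staging", "labels": {"compute": "fargate"}}],
}
```

With this profile, a pod in the `staging` namespace labeled `compute=fargate` would match, while an unlabeled pod in the same namespace would stay on Amazon EC2 worker nodes.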

Each provisioned pod running on AWS Fargate is allocated 10 GB of container image layer storage and an additional 4 GB for each volume mount. The pod storage is ephemeral, so it is deleted after the pod terminates. Persistent storage, however, resides on an isolated volume and is not deleted when the pod terminates.
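
As a quick worked example of the allocation described above, the following Python sketch adds up the ephemeral storage for a pod. The constants come from the figures in this article, and `ephemeral_storage_gb` is a hypothetical helper.

```python
IMAGE_LAYER_GB = 10       # container image layer storage per pod
PER_VOLUME_MOUNT_GB = 4   # additional storage per volume mount


def ephemeral_storage_gb(volume_mounts: int) -> int:
    """Total ephemeral storage for a pod with the given number of volume mounts."""
    if volume_mounts < 0:
        raise ValueError("volume_mounts must not be negative")
    return IMAGE_LAYER_GB + PER_VOLUME_MOUNT_GB * volume_mounts
```

A pod with two volume mounts would therefore get 10 + 2 × 4 = 18 GB of ephemeral storage.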

Operators can deploy Amazon EKS on AWS Fargate either through the AWS web console or with eksctl, the official command-line tool for bootstrapping and managing Kubernetes clusters on Amazon EKS.

Amazon EKS on AWS Fargate is fully compatible with open-source Kubernetes. However, there are some limitations:

- Each pod can be allocated a maximum of 4 vCPUs and 30 GB of memory.

- There is currently no support for stateful workloads that require attached storage, such as Amazon Elastic Block Store.

- It is not possible to run DaemonSets, privileged pods, or pods that use HostNetwork or HostPort.

- The only load balancer you can use is Application Load Balancer.

Containers on Fargate: ECS or EKS?

AWS Fargate can be used as a launch option for both Amazon ECS and Amazon EKS. Given its modular approach, there are not many differences between ECS and EKS on AWS Fargate. Both options have nearly the same pros and cons, as well as features and limitations. However, there are also differences between the ECS and EKS services themselves, which one should be aware of when weighing options.

Conclusion #1. Amazon EKS on AWS Fargate provides an end user with a trade-off between manageability and customizability. For a small fee, AWS Fargate takes over all responsibility for managing and upgrading worker nodes. This drastically decreases the usage complexity, as the operator’s work is reduced mainly to workload management and security provisioning. For testing environments, developers can now create Amazon EKS clusters themselves. Prior to AWS Fargate, this process was complex, because developers also had to know how to deploy worker nodes.

Conclusion #2. AWS provides better pricing for its Amazon ECS service, while Amazon EKS workloads can be safely migrated to any other Kubernetes installation at any time. When considering pricing, it might be reasonable to use Amazon ECS if your services are going to stay on AWS. However, if you plan to migrate your workload to other cloud providers or to an on-premises environment, use Amazon EKS instead.

Conclusion #3. While ECS serves the same purpose as EKS, it is available only on the Amazon cloud, which significantly limits its application scope. Amazon EKS, in turn, is compatible with open-source Kubernetes. For users who rely on solutions for centralized management of Kubernetes clusters, it makes sense to go with EKS instead of ECS, since EKS exposes the same API as open-source Kubernetes. Amazon EKS on AWS Fargate is a good option for users who seek a secure and scalable Kubernetes service and do not want to spend time on infrastructure maintenance.

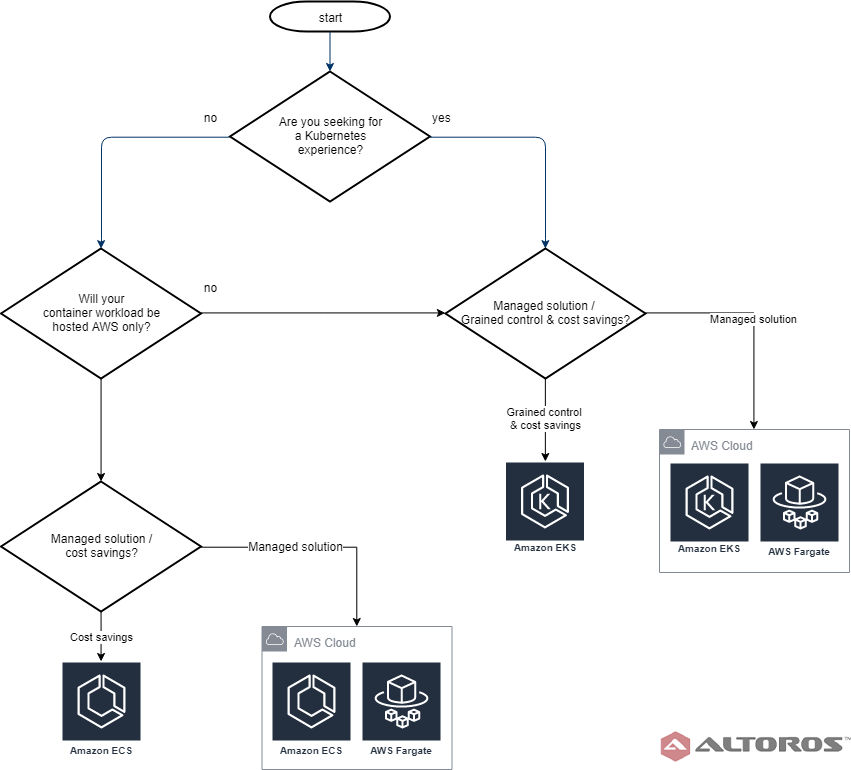

A flowchart of AWS container orchestration solutions

When choosing between launch options, it is important to note that there are trade-offs between AWS Fargate and Amazon EC2. For instance, mounting Amazon Elastic Block Store volumes is only supported by Amazon EC2. This means that persistent disks and, consequently, stateful workloads are not available in AWS Fargate. On the other hand, AWS Fargate automatically provides users with security mechanisms at the node level. Users remain responsible for keeping their workloads secure, though.

Though AWS Fargate is initially more expensive than Amazon EC2, the continuous maintenance effort that Amazon EC2 requires can end up costing more in the long run. So, the final choice depends on the expected lifetime of your project, the services you need, and requirements for security and interoperability, especially if there is Kubernetes-centric tooling you want to leverage.
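
The two main decision points from this section can be summarized in a few lines of Python. This is only a rough encoding of the trade-offs discussed in the text, not a reproduction of the flowchart, and it deliberately ignores pricing and maintenance effort. `choose_stack` and its parameters are hypothetical.

```python
def choose_stack(needs_ebs_volumes: bool, needs_kubernetes_api: bool) -> tuple:
    """Suggest an (orchestrator, compute option) pair from two yes/no questions."""
    # Portability and Kubernetes-centric tooling point to EKS; otherwise ECS.
    orchestrator = "Amazon EKS" if needs_kubernetes_api else "Amazon ECS"
    # Stateful workloads on EBS volumes require EC2; otherwise Fargate suffices.
    compute = "Amazon EC2" if needs_ebs_volumes else "AWS Fargate"
    return orchestrator, compute
```

For instance, a stateless service that has to remain portable across Kubernetes installations would map to Amazon EKS on AWS Fargate.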

Further reading

- Installing Kubernetes with Kubespray on AWS

- Ensuring Security Across Kubernetes Deployments

- Automating Event-Based Continuous Delivery on Kubernetes with keptn

edited by Sophia Turol, Carlo Gutierrez, Valeryia Vishevataya, and Alex Khizhniak.