How to Implement Integration Tests for Juju Charms

If you think that implementing integration tests for Juju Charms (Canonical’s orchestration solution) is a trivial task, you may be surprised. Last month, I was involved in testing a collection of 30 mature charms, and I summarized my experience as recommendations on how to solve the challenges that arise.

The testing workflow

Juju Charms are recipes for deploying software and maintaining its life cycle. To implement a test for a charm, you also need to understand how the deployed software works. Implementing an integration test for a charm comes down to these steps:

- Download the charm’s code

- Understand what the tool does

- Review the charm’s code

- Write the test inside the charm’s tests directory

- Run the test and make sure it behaves as expected

- Report any bugs found while running the tests

- Push the code and request a merge into the main branch

To complete this task successfully, you need to be familiar with the internals of the Juju platform, understand how charms work, and have basic Python programming skills. Below, I share the tips that worked in my case, so that you can implement integration tests for Juju Charms without a bumpy ride.

Tip #1: Using the DEB transparent proxy

To start working with the DEB transparent proxy, install apt-cacher-ng:

```shell
apt-get install apt-cacher-ng
```

Then add the proxy setting to environments.yaml. It should look like this:

```yaml
environments:
  local:
    type: local
    apt-http-proxy: http://10.0.3.1:3142
```

If you test this in the LXC environment on a c3.xlarge AWS machine, as I did, the results are striking. Without the DEB transparent proxy, installing the packages (23.2 MB) needed to deploy the nagios charm took 11 seconds (2,048 KB/s). With the apt-cacher-ng DEB proxy, it took well under a second (48.7 MB/s).

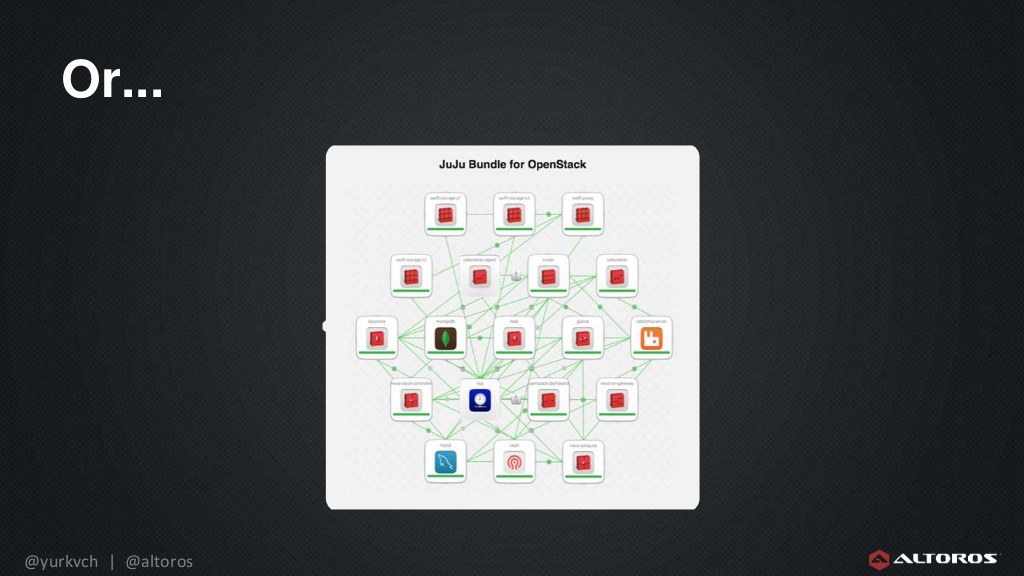

Saving 11 seconds may seem trivial, but if you are testing OpenStack, which deploys many charms, it adds up to 2 minutes per run!
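The timings above are simply package size divided by download rate. A quick check in Python, assuming the reported rates held for the whole download, reproduces them and shows what the 2-minute OpenStack figure implies: roughly ten or eleven installs of this size per run (an inference from these numbers, not a measured count).

```python
def transfer_seconds(size_mb, rate_kb_per_s):
    """Seconds needed to download size_mb megabytes at rate_kb_per_s KB/s."""
    return size_mb * 1024 / rate_kb_per_s


SIZE_MB = 23.2  # packages installed when deploying the nagios charm

without_proxy = transfer_seconds(SIZE_MB, 2048)      # about 11.6 s
with_proxy = transfer_seconds(SIZE_MB, 48.7 * 1024)  # about 0.48 s
saving = without_proxy - with_proxy

print('saving per install: %.1f s' % saving)
# How many installs of this size add up to about two minutes?
print('installs per 2 minutes: %.1f' % (120 / saving))
```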

Tip #2: Run tests faster with the LXC test environment

The LXC provider can outperform AWS in both machine provisioning and speed, depending on your hardware. My own computer has seen better days, so I decided to use the LXC provider inside a c3.xlarge AWS machine.

The performance difference when running tests is significant. We tested two scenarios. The first was bootstrapping the environment (running juju bootstrap). With the Amazon cloud provider, bootstrapping took 5 minutes 2 seconds; with the LXC provider inside the c3.xlarge AWS machine, it took only 22 seconds.

The second scenario was to run Bundletester against the Meteor charm. In this scenario, using the Amazon cloud provider, it took 12 minutes to run all tests, while with LXC it took 3 minutes 18 seconds.

Here is an overview of the results:

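| Scenario | Amazon cloud provider | LXC provider (inside c3.xlarge) |
|---|---|---|
| juju bootstrap | 5 min 2 s | 22 s |
| Bundletester trusty meteor | 12 min | 3 min 18 s |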
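To put the table in proportion, the speedups are simple ratios of the measured times. The snippet below only restates the numbers above in seconds; the ratios will differ on other hardware.

```python
# Measured times from the table above, in seconds: (Amazon provider, LXC provider)
TIMINGS = {
    'juju bootstrap': (5 * 60 + 2, 22),
    'Bundletester trusty meteor': (12 * 60, 3 * 60 + 18),
}


def speedup(aws_seconds, lxc_seconds):
    """How many times faster the LXC run was than the Amazon run."""
    return aws_seconds / lxc_seconds


for scenario, (aws_s, lxc_s) in TIMINGS.items():
    print('%-28s %4.1fx faster with LXC' % (scenario, speedup(aws_s, lxc_s)))
```

That works out to roughly 13.7 times faster for bootstrapping and 3.6 times faster for the Bundletester run.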

Tip #3: Accelerate with BTRFS subvolumes and snapshots

If the provisioned machines are stored on a BTRFS partition, LXC provisions them much faster thanks to the cloning capabilities of BTRFS. BTRFS supports subvolumes, which are directories that can be snapshotted. A snapshot is a special type of subvolume that contains a copy of the current state of another subvolume. If you need more info about this, take a look at this article.

To make use of this feature, add a volume to the machine via AWS, then run these commands as root:

```shell
service lxc stop                      # stop LXC before moving its data
mkfs.btrfs -m single /dev/xvdf        # or the name of your device
mv /var/lib/lxc /var/lib/lxc-old
mkdir /var/lib/lxc
mount /dev/xvdf /var/lib/lxc          # add an /etc/fstab entry to keep it after reboots
mv /var/lib/lxc-old/* /var/lib/lxc/   # ignore moving errors
service lxc start
```
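Before timing clones, it may be worth confirming that /var/lib/lxc really ended up on the BTRFS volume, since snapshot clones depend on it. A minimal sketch, assuming a Linux host that exposes /proc/mounts: it picks the longest mount point containing the path and reports that mount's file system type. Mount points with spaces (which /proc/mounts escapes) are not handled, and the function name is illustrative.

```python
import os


def fs_type_for(path, mounts_text):
    """Return the file system type of the mount that contains path."""
    path = os.path.abspath(path)
    best_mount, best_type = '', None
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue
        mount_point, fs_type = fields[1], fields[2]
        # The path belongs to this mount if it is the mount point or lies below it
        prefix = mount_point.rstrip('/') + '/'
        inside = path == mount_point or path.startswith(prefix)
        # The longest matching mount point is the one actually holding the path
        if inside and len(mount_point) > len(best_mount):
            best_mount, best_type = mount_point, fs_type
    return best_type


if __name__ == '__main__':
    if os.path.exists('/proc/mounts'):
        with open('/proc/mounts') as f:
            print('/var/lib/lxc is on', fs_type_for('/var/lib/lxc', f.read()))
```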

Now you can see how fast cloning is by testing it with LXC directly:

```
root@ip-172-31-14-23:/home/ubuntu# time lxc-clone -s -o ubuntu-local-machine-1 -n ubuntu-local-machine-2
Created container ubuntu-local-machine-2 as snapshot of ubuntu-local-machine-1

real    0m0.056s
user    0m0.000s
sys     0m0.019s
root@ip-172-31-14-23:/home/ubuntu# time lxc-clone -o ubuntu-local-machine-1 -n ubuntu-local-machine-3
Created container ubuntu-local-machine-3 as copy of ubuntu-local-machine-1

real    0m18.332s
user    0m3.921s
sys     0m2.996s
root@ip-172-31-14-23:/home/ubuntu#
```

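The -s flag makes lxc-clone create a snapshot instead of a full copy, and the difference is dramatic: 0.056 seconds versus 18.3 seconds in the run above. Snapshots also reduce disk usage, because the clone shares unchanged data with the original container.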

Tip #4: Save time with the AWS machine startup ‘button’

I was using a large AWS machine to run the tests, and there was no point in keeping it online all the time, so I adapted a script that starts and stops it. With this script, I no longer waste time logging in to the AWS dashboard to start and stop the machine. The script uses the boto 2 library and finds the instance by its Name tag.

```python
#!/usr/bin/python
#
# Based on work done by Andrew McDonald andrew@mcdee.com.au http://mcdee.com.au
#
# Starts or stops an EC2 instance, looked up by its Name tag (boto 2.x).
from __future__ import print_function

import os
import sys
import time

import boto.ec2

# AWS_ACCESS_KEY_ID (taken from the environment when set)
AKID = os.environ.get('AWS_ACCESS_KEY_ID', 'Your Access Key ID')
# AWS_SECRET_ACCESS_KEY (taken from the environment when set)
ASAK = os.environ.get('AWS_SECRET_ACCESS_KEY', 'Your AWS Secret Access Key')
# Region string
REGION = 'us-west-2'  # your region


def print_usage(args):
    print('Usage:', args[0], 'stop|start <instance name>')
    sys.exit(1)


def usage(args):
    """Validate the command line and return the instance name."""
    if len(args) != 3 or args[1] not in ('stop', 'start'):
        print_usage(args)
    return args[2]


def find_instance(conn, name):
    """Return the first instance whose Name tag matches, or exit."""
    try:
        return conn.get_all_instances(
            filters={'tag:Name': name})[0].instances[0]
    except IndexError:
        print('Error:', name, 'not found!')
        sys.exit(1)


myinstance = usage(sys.argv)
conn = boto.ec2.connect_to_region(REGION,
                                  aws_access_key_id=AKID,
                                  aws_secret_access_key=ASAK)
inst = find_instance(conn, myinstance)

if sys.argv[1] == 'start':
    if inst.state != 'running':
        print('Starting', myinstance)
        inst.start()
        # Poll every 10 seconds until EC2 reports the instance as running
        while inst.state != 'running':
            print('...instance is %s' % inst.state)
            time.sleep(10)
            inst.update()
        print('Instance started')
        print('Instance IP: %s' % inst.ip_address)
        print('ssh -A -D 9999 ubuntu@%s' % inst.ip_address)
    else:
        print('Error:', myinstance, 'already running or starting up!')
        print('Instance IP: %s' % inst.ip_address)
        print('ssh -D 9999 ubuntu@%s' % inst.ip_address)
        sys.exit(1)

if sys.argv[1] == 'stop':
    if inst.state == 'running':
        print('Stopping', myinstance)
        inst.stop()
    else:
        print('Error:', myinstance, 'already stopped or stopping')
        sys.exit(1)
```

You can improve it by adding a cron job that shuts the machine down if no Bundletester process has been seen for a certain period of time.

You can also start the machine just to run tests. In that case, the script can start the machine, copy the tests to it, run them, retrieve the test output, and stop the machine automatically.
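A minimal sketch of the cron idea, assuming Python 3 on a Linux machine with /proc, that a running Bundletester shows up as a process whose command line contains "bundletester", and a 30-minute idle window. The state-file name, the schedule, and the idle limit are hypothetical choices, not part of the original setup. For an EBS-backed instance, shutting down the operating system stops the instance by default, so the start script can bring it back later.

```python
#!/usr/bin/env python3
# Hypothetical idle-shutdown check for the test machine. Run it from root's
# crontab, for example every 5 minutes:
#   */5 * * * * /usr/bin/python3 /usr/local/bin/idle_shutdown.py
import os
import subprocess
import tempfile
import time

IDLE_LIMIT = 30 * 60  # assumed idle window, in seconds
# Assumed location of the "last seen running" timestamp.
STATE_FILE = os.path.join(tempfile.gettempdir(), 'bundletester-last-seen')


def bundletester_running(proc_root='/proc'):
    """Return True if any process command line mentions bundletester."""
    if not os.path.isdir(proc_root):
        return False
    for pid in os.listdir(proc_root):
        if not pid.isdigit():
            continue
        try:
            with open(os.path.join(proc_root, pid, 'cmdline'), 'rb') as f:
                cmdline = f.read()
        except OSError:
            continue  # the process exited or its cmdline is not readable
        if b'bundletester' in cmdline:
            return True
    return False


def should_shut_down(last_seen, now, idle_limit=IDLE_LIMIT):
    """True once no test run has been seen for idle_limit seconds."""
    return now - last_seen >= idle_limit


def check(state_file=STATE_FILE):
    """Update the last-seen timestamp and report whether the machine is idle."""
    now = time.time()
    if bundletester_running() or not os.path.exists(state_file):
        # Tests are running, or this is the first check: restart the clock.
        with open(state_file, 'w') as f:
            f.write(str(now))
        return False
    with open(state_file) as f:
        last_seen = float(f.read())
    if should_shut_down(last_seen, now):
        os.remove(state_file)  # so the next boot starts with a fresh clock
        return True
    return False


if __name__ == '__main__':
    if check():
        subprocess.call(['shutdown', '-h', 'now'])
```

Removing the state file before shutting down matters: otherwise the first check after the next boot would see an old timestamp and stop the machine again immediately.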

Tip #5: Track disk space shortage on LXC!

Never running out of disk space should be among your priorities: once you reach that point, making the environment usable again is a real pain. Make sure you have at least 20 GB available on the partition where ~/.juju is located.
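A small pre-flight check can warn you before you get there. The sketch below, assuming Python 3.3 or later for shutil.disk_usage, reports the free space on the partition holding ~/.juju (falling back to the nearest existing parent directory) and compares it against the 20 GB threshold; the function names are illustrative.

```python
import os
import shutil

MIN_FREE_GB = 20  # the threshold recommended above


def free_gb(path):
    """Free space, in GB, on the partition holding path or its nearest existing parent."""
    path = os.path.abspath(os.path.expanduser(path))
    while not os.path.exists(path):
        path = os.path.dirname(path)
    return shutil.disk_usage(path).free / 1024 ** 3


def check_juju_space(path='~/.juju', min_gb=MIN_FREE_GB):
    """Print a warning and return False if less than min_gb GB is free."""
    free = free_gb(path)
    if free < min_gb:
        print('WARNING: only %.1f GB free for %s (want %d GB)' % (free, path, min_gb))
        return False
    return True


if __name__ == '__main__':
    check_juju_space()
```

Running it before each batch of tests (or from the same cron job as other housekeeping) gives you time to clean up before the environment breaks.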

If you run out of disk space, this is what you should do:

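- Force-destroy the environment:

```shell
juju destroy-environment local -y --force
```

- Then, if an LXC container is left hanging, destroy it as root with lxc-destroy (lxc-ls lists the existing containers):

```shell
lxc-destroy -n <container-name>
```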

Tip #6: Install Python 3 dependencies

Remember to install the Python 3 dependencies. Dependencies are usually installed by the tests/00-setup script, and for Python 3 packages you need to invoke the Python 3 version of easy_install (easy_install3), which is provided by the python3-setuptools package.

This is what you need to add to the script:

```shell
apt-get install python3-setuptools
easy_install3 <package>   # repeat for each Python 3 package your tests need
```
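To catch a missing Python 3 dependency early, with a clear message instead of an ImportError halfway through a test run, a test could check its imports up front. A minimal sketch using importlib.util.find_spec; the module names you pass depend on your tests, and the helper name is hypothetical.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names this interpreter cannot import."""
    return [name for name in names if importlib.util.find_spec(name) is None]
```

For example, a test could call missing_modules(['amulet']) at startup and exit with a message listing whatever is returned. Only top-level module names are checked here, since find_spec on a dotted name imports the parent package first.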

Tip #7: Improve your test implementation workflow

As the days went by, I realized that the proposed workflow had many problems.

- Idle waiting: I was stuck whenever I had to wait for a test run to finish. Integration tests take much longer than unit tests (minutes instead of seconds), which makes it hard to stay focused on the task, so waiting minutes for every run was a big waste of time.

- Limited test planning: We were missing the opportunity to gather information from the community on the most wanted tests.

Therefore, we modified the workflow to address these two issues:

- We started implementing the tests for all charms together, so that we could run them in a batch. This way, I could work on other tests while waiting for a test run to finish.

- We started publishing the test plan as a bug on Launchpad a few days before implementing the tests, so that we could:

  - give the community the opportunity to improve and correct the list

  - engage the community in the practice of implementing tests, which are crucial for a solid platform
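The batch idea can be sketched as a small sequential runner, assuming each charm’s tests are launched by a single command (for example, Bundletester invoked in the charm’s directory); the labels and demo commands are placeholders. It runs the jobs one after another, since parallel runs would likely compete for the same Juju environment, and collects exit codes so you can review the failures once the batch completes.

```python
import subprocess
import sys


def run_batch(jobs):
    """Run each job's command one after another and collect exit codes.

    jobs maps a label (for example, a charm name) to an argv list.
    """
    results = {}
    for name, argv in jobs.items():
        print('==> running tests for %s' % name, flush=True)
        try:
            results[name] = subprocess.call(argv)
        except FileNotFoundError:
            results[name] = 127  # the command is not installed here
    return results


def failed_jobs(results):
    """Labels of the jobs that exited with a non-zero status, sorted."""
    return sorted(name for name, code in results.items() if code != 0)


if __name__ == '__main__':
    # Demo jobs only; replace them with the real test command for each charm.
    demo = {
        'passing-charm': [sys.executable, '-c', 'pass'],
        'failing-charm': [sys.executable, '-c', 'raise SystemExit(1)'],
    }
    print('failed:', failed_jobs(run_batch(demo)))
```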

Tools used

This section features an overview of the tools used for the implementation.

Amulet

Amulet is a Python 3 library designed to test Juju Charms. It wraps the Juju command line tool with a pythonic interface and a proposed workflow for testing.

Charm tools

Charms are designed to simplify the deployment, configuration, and exposure of services in the cloud. The Juju platform offers some tools to debug charms and understand how they behave. The entry point of this package is the juju command. The subcommands I used most:

- juju deploy <charm>: allowed me to try the charm as it comes, without much research.

- juju debug-log: while running tests and deployments, this command gives you the logs of all machines in one chronological log.

- juju debug-hooks <unit> <hook>: to understand how hooks work, I used this to pause a specific hook run and inspect the data the charms were exchanging.

For more info, check out the charms documentation.

Charm helpers

The charmhelpers Python library is a collection of functions that simplify the development of charms. It also lets charm test authors interact with the deployment easily.

The main use cases where I applied this library were as follows:

- running commands in a specific unit

- reading config files

- checking if a process is running

- checking open ports

For more info, check out this website.
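To illustrate what an open-port check boils down to, here is a standard-library sketch (not the charmhelpers API) that simply tries a TCP connection, assuming the unit’s address is reachable from the machine running the tests. The helper name is hypothetical.

```python
import socket


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False
```

In a test, you would pass the unit’s public address, obtained from your test harness, and the port the charm is supposed to open, for example port_open(address, 80).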

Canonical/Juju online tools

Canonical provides certain tools that simplify coordination between developers.

a) The review.juju.solutions queue is used to coordinate the charmers Launchpad group. Its main purpose is reviewing merge proposals to the charm repository. The review queue also lets reviewers run the test suites against new branches, verifying that merge proposals don’t break anything before they are merged.

b) Launchpad is an all-in-one project management tool that helps Canonical and free software advocates coordinate their work. It provides Bazaar code repositories per project, issue tracking, and other functionality. If you plan to implement a test for a charm, you will have to check the charm’s project page, clone the project’s Bazaar repository, push your branch to Bazaar, and create a merge proposal. Before taking these steps, don’t forget to create a Launchpad account and complete your registration. One step that is not very clear is signing the Ubuntu Code of Conduct; to do it, follow the steps specified in this guide.

c) IRC is the preferred way of communication between the team members of the Juju project. The channel is irc://irc.freenode.net:6667/#juju. If you are not used to IRC, you can use the Freenode Webchat client.

Conclusions

As you can see, we implemented integration tests for the Juju platform successfully, though not without a few bumps along the way. Still, if you follow these recommendations, you will have a pleasant experience with Juju Charms, an incredible tool for deploying and maintaining services online.

Further reading:

- How to Deploy, Customize, and Upgrade Juju Charms for Cloud Foundry

- Deploying Cloud Foundry in a Single Click with Juju Charms

- Cloud Foundry Deployment Tools: BOSH vs. Juju Charms

About the author

Nicolás Pace is Senior Python Developer at Altoros. With 10 years of engineering experience, he drives project planning, architecture design, app deployment, and team management. Nicolás has successfully led a variety of cross-functional projects, reaching organizational objectives and mentoring other developers. He is proficient in resolving complex technical issues, possessing deep knowledge of open-source tools that enable developers to quickly prototype and test business systems.
