Data Integration: ETL vs. Hand-Coding

(Featured image credit: TDWI)

Why create custom data integration software?

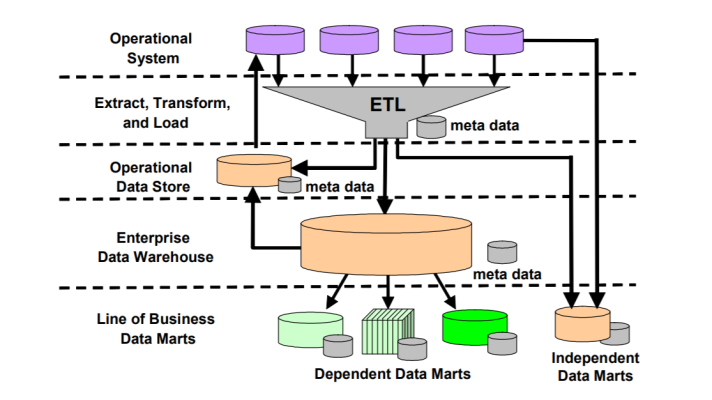

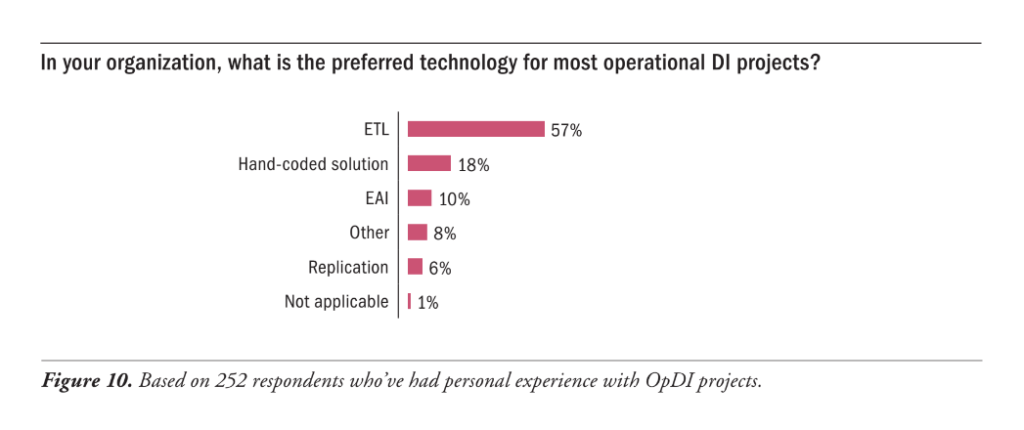

Extracting data from one location, transforming it, and loading it into another has always been the core task of data integration. Yet many companies still hand-code this work, a practice that BI expert Rick Sherman considers outdated. Here is his reasoning (from part 1 and part 2 of his series).

According to Rick, hand-coding becomes harder to manage as data volumes grow and integration tasks get more complex. Each data source can require many pages of SQL code, so the multiple scripts that gather data from different sources are hard to keep up to date. Over the long term, this approach also grows more expensive while productivity declines.
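To make that maintenance burden concrete, here is a minimal sketch of hand-coded integration. It is written in Python rather than SQL and is not taken from Rick's articles; the two sources, their field names, and the `customers` target table are hypothetical. The point is that every source needs its own hand-written mapping, and each one has to be updated whenever that source's format changes.

```python
import csv
import io
import json
import sqlite3

# Hypothetical source 1: a CRM export in CSV with its own column names.
CRM_CSV = """cust_id,full_name,signup
1,Ada Lovelace,2021-03-01
2,Alan Turing,2021-04-15
"""

# Hypothetical source 2: a billing feed in JSON with different keys,
# nested names, and DD/MM/YYYY dates.
BILLING_JSON = '[{"customerNumber": 3, "name": {"first": "Grace", "last": "Hopper"}, "created": "01/05/2021"}]'


def transform_crm(text):
    """Hand-coded mapping of the CRM feed to (id, name, signup_date)."""
    rows = csv.DictReader(io.StringIO(text))
    return [(int(r["cust_id"]), r["full_name"], r["signup"]) for r in rows]


def transform_billing(text):
    """Hand-coded mapping of the billing feed to (id, name, signup_date)."""
    out = []
    for rec in json.loads(text):
        day, month, year = rec["created"].split("/")  # DD/MM/YYYY -> ISO date
        name = f'{rec["name"]["first"]} {rec["name"]["last"]}'
        out.append((rec["customerNumber"], name, f"{year}-{month}-{day}"))
    return out


def load(conn, rows):
    """Load already-transformed rows into the common target table."""
    conn.executemany(
        "INSERT INTO customers (id, name, signup_date) VALUES (?, ?, ?)", rows
    )


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, signup_date TEXT)"
)
# Each additional source means another hand-written transform_* function here.
for transform, payload in [(transform_crm, CRM_CSV), (transform_billing, BILLING_JSON)]:
    load(conn, transform(payload))
conn.commit()
```

With two sources this is manageable; with dozens, each drifting independently, it becomes the kind of script sprawl described above, which ETL tools aim to replace with reusable, configurable transformations.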

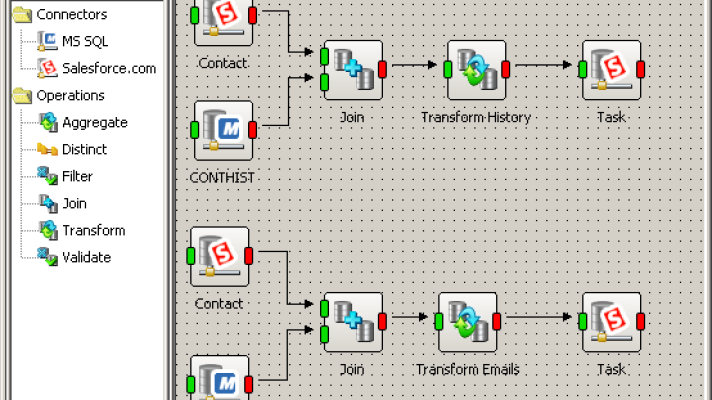

ETL tools, by contrast, may seem costly at first, but they support most common processes, offer many transformation options, and provide prebuilt components for data integration tasks of varying complexity. Many products also offer functionality for data migration, data profiling, data quality, application consolidation, and more, to turn data into "comprehensive, consistent, clean, and current information." In the long run, they prove more productive and cost-efficient, since programmers spend less of their time, and your budget, on repetitive hand-coding.

Image credit

Besides, the days of extremely expensive ETL tools are over. Today, offerings are available for a wide range of budgets, configurations, and needs. There is also a range of open-source data integration tools, which, according to Gartner analysts, are a good choice for standard ETL tasks.

Data integration vendors could be more visible

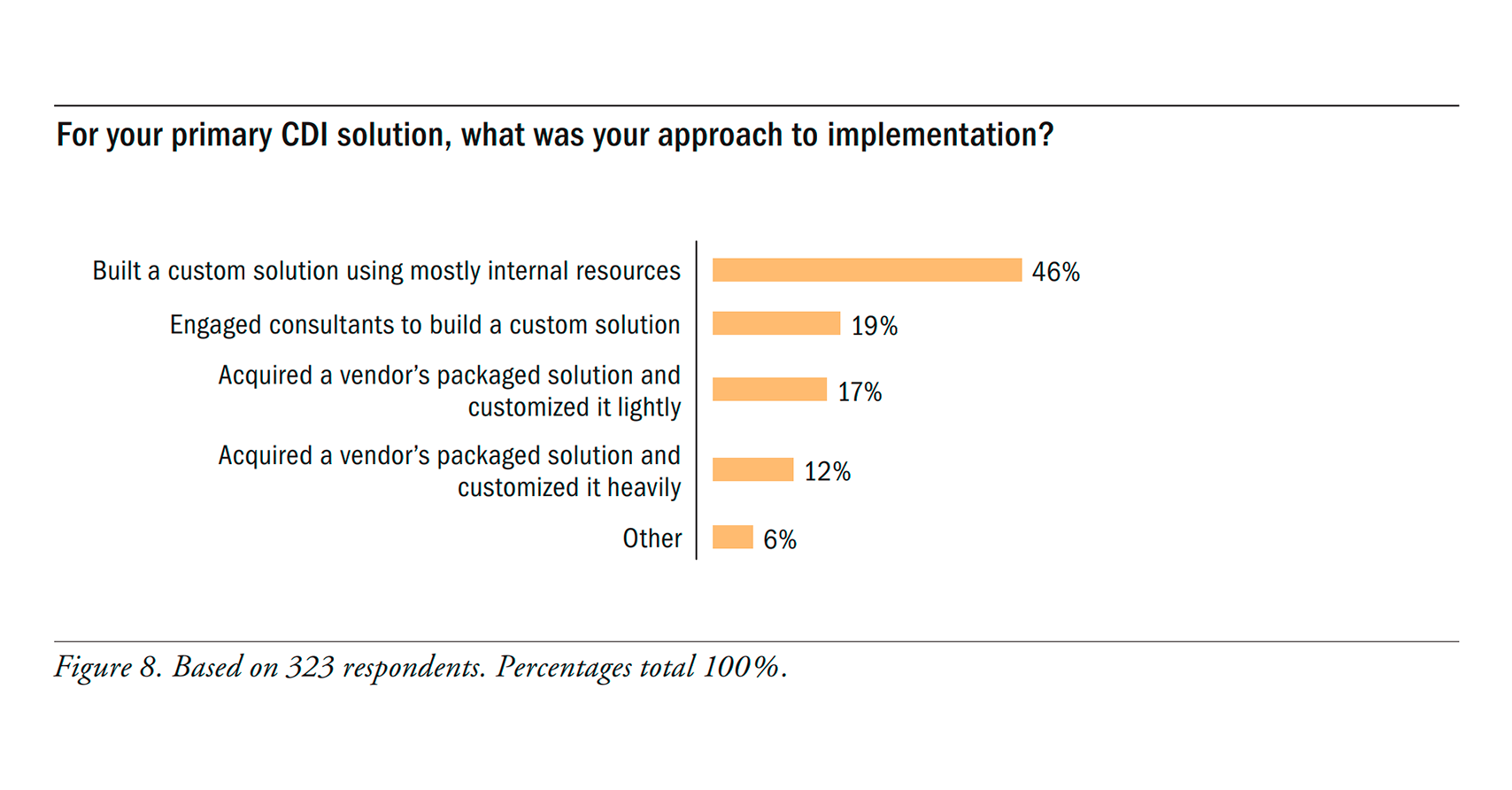

In another interesting piece, Rick notes that today's ETL market appears limited to a small number of providers. That is not because no other companies exist, but because many data integration vendors are simply invisible to potential buyers.

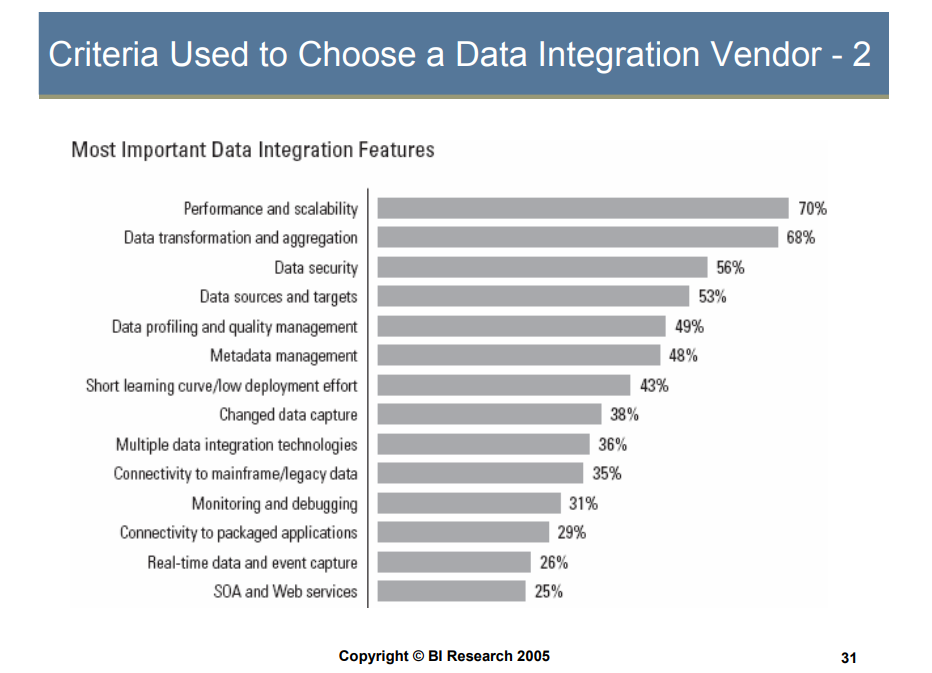

I agree. I often read complaints on the web about large, expensive data integration offerings whose broad functionality a company looking for a data integration solution simply can't afford. Moreover, such companies often don't need all that functionality; their needs rarely go beyond standard ETL scenarios. Still, choosing a data integration tool that fits both their needs and their budget is a challenge.

Essential data integration features (image credit)

At the same time, many vendors of data integration and ETL tools sit, as Rick puts it, "between the mega-products and the bundled tools in terms of functionality and total cost of ownership," yet they are known to only a small audience.

What's the way out? ETL buyers should make more of an effort and not limit themselves to a few best-known vendors. At the same time, Rick called on data integration vendors to come out of the shadows and make themselves better known.

Further reading

- Gartner Suggests Rationalizing Data Integration Tools to Cut Costs

- Reducing ETL and Data Integration Costs by 80% with Open Source